This is the fourth article in a series about the future of the book. In the first article, I argued that text survives as a knowledge technology — because sequential language maps to how thinking works. In the second, I examined what happens when anyone can write a book. The third explored how readers decide what to read next when the flood of content overwhelms every filter. Now comes the question I've been circling the longest: what happens to deep reading itself when shortcuts are everywhere?

The arguments are getting louder on both sides.

Cognitive neuroscientist Maryanne Wolf warns that deep reading is the last defense against intellectual decline, and that the brain circuits for concentration, empathy, and inference are atrophying as we offload more thinking to machines. Nicholas Carr has spent fifteen years making essentially the same case: that screens, and now AI, are replacing deep reading with skimming, flattening intelligence in the process. Cal Newport observes that AI users spend less time in deep, focused work while their shallow work intensifies. A recent PNAS Nexus study found that across seven experiments, people who used LLM summaries developed shallower, less original knowledge structures than those who searched for and integrated information themselves.

"When we use AI to read for us, what do we gain? What do we lose? Uncritically adopting an AI to read for you is far more dangerous a threat to knowledge acquisition than using AI to write for you." Marc Watkins, educator and AI literacy researcher

On the other side, the optimists see something different. Tyler Cowen, one of the most prolific readers alive, now treats AI as a reading companion — asking it questions while he reads, turning books into dynamic dialogues. Ethan Mollick, at Wharton, runs classroom experiments showing AI can augment reading without replacing it. Alan Chan argues that AI's most underrated use isn't learning faster — it's becoming capable of learning knowledge that's deeper, harder, and more abstract than you could manage alone.

"The most underrated use of AI isn't learning faster — it's becoming capable of learning knowledge that's deeper, harder, and more abstract." Alan Chan, co-founder of Heptabase

Both sides have evidence. Both are partly right. But both share a blind spot: neither side defines what "knowing" actually means when correct answers and summaries are cheap.

This matters, because without that definition, you can't tell whether a shortcut is helping or hurting. You need a standard.

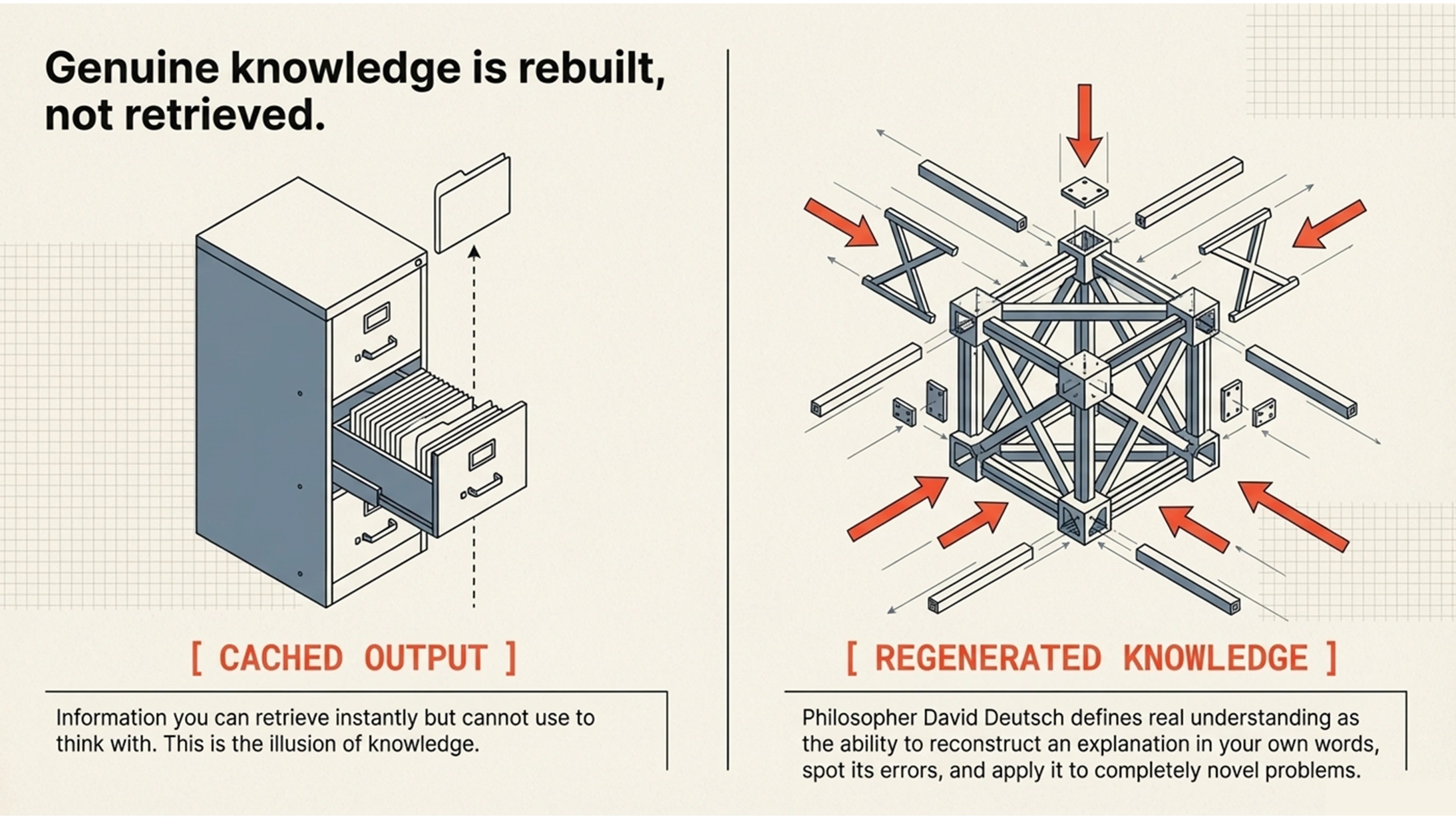

The philosopher David Deutsch provides one. In The Beginning of Infinity, he argues that genuine knowledge isn't something you receive; it's something you regenerate. When you truly understand an explanation, you can reconstruct it in your own words, spot errors in it, and apply it to new problems. Anything less is what we might call cached output: stored answers you can retrieve but can't actually think with.

Throughout this series, I've defined a book as a technology for entering another mind, a structured journey through someone's thinking designed to rebuild an understanding in yours. The question for consumption, then, is precise: does the shortcut still let you rebuild the understanding? Or does it just give you a prettier version of cached output?

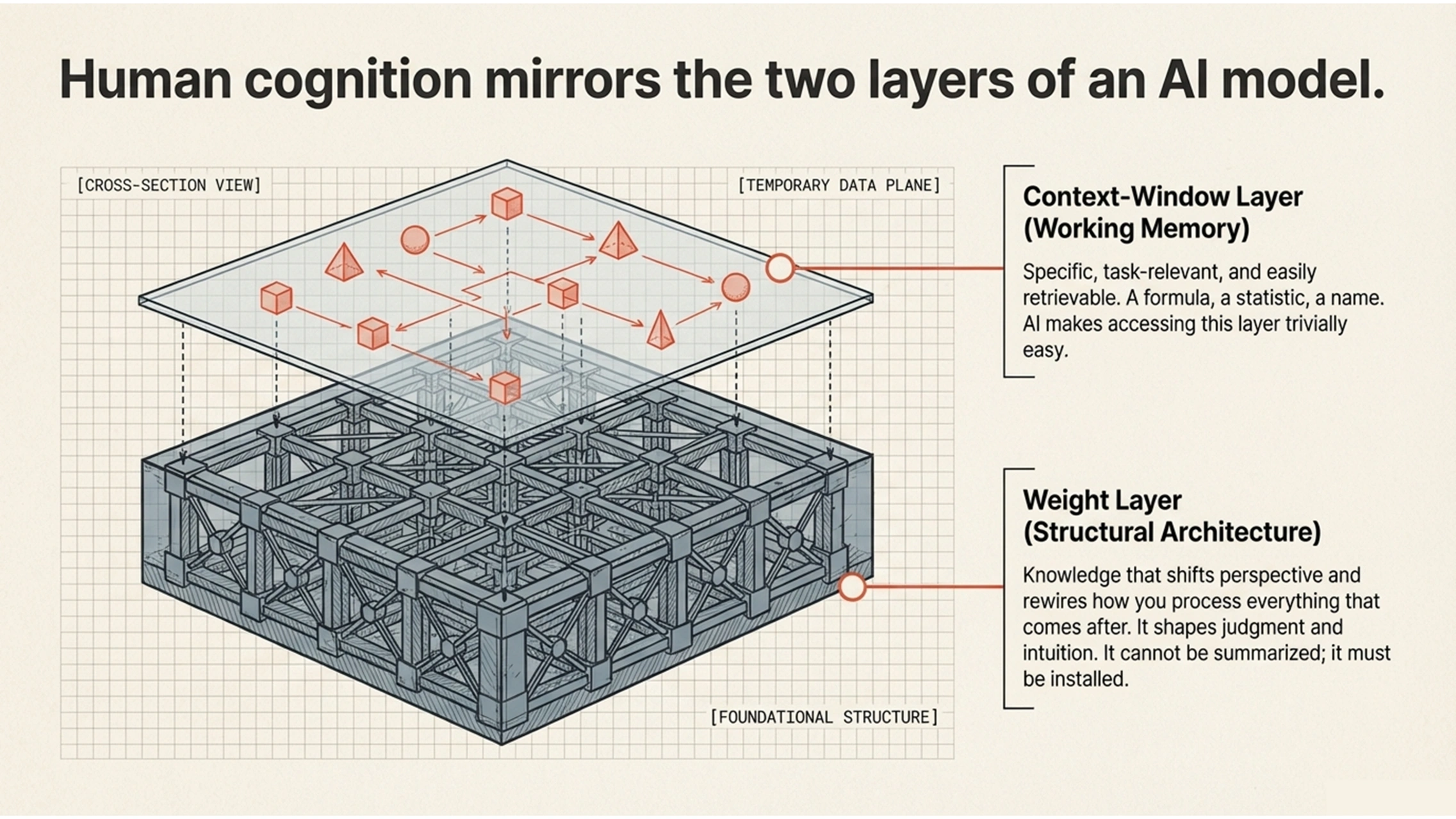

There's a useful analogy from the world of AI itself. Andrej Karpathy — former head of AI at Tesla, co-founder of OpenAI, and one of the most respected voices in the field — describes two kinds of knowledge inside a large language model. The knowledge baked into the model's parameters during training is like a vague recollection of something you read months ago: roughly right, but unreliable in its details. The knowledge in the context window — the information you actively feed the model right now — is like working memory: vivid, precise, ready for immediate reasoning.

This maps onto something deeper about human knowing. Some knowledge lives at what we might call the weight layer. It changes how you process everything that comes after. A book that genuinely shifts your perspective on the world doesn't just add information to your mental filing cabinet — it rewires how you think. No matter what new situation you encounter afterwards, you produce different responses, different judgments, different questions, because that book changed your underlying architecture. This is weight-layer knowledge. It's less precise than a memorized fact, but it's structural. It shapes everything.

Other knowledge lives at the context-window layer. It's specific, retrievable, task-relevant. The name of a technique. A statistic you need for an argument. A formula you apply to a calculation. This kind of knowledge is enormously useful, and it's the kind that AI makes trivially easy to access.

Both layers are necessary. You can't outsource everything to lookup — and you can't operate purely on perspective without concrete knowledge to reason with.

We still teach arithmetic even though calculators are everywhere. Not because students will do long division by hand for the rest of their lives, but because mathematical thinking changes your weights. It restructures how you approach problems. The calculator handles the context-window work: the specific computation. But without the underlying mathematical intuition, you don't even know which computation to run, or whether the result makes sense.

The same holds for navigation. GPS fills your context window with turn-by-turn directions. You arrive at your destination. But you haven't built a spatial understanding of the territory. You can't orient yourself when the technology fails. Map reading — that older, slower, more effortful skill — changes your weights. It gives you a relationship with the territory that no set of instructions can substitute.

The pattern holds for reading. AI summaries and chat-based book queries are raising the floor of knowledge access. Everyone can now get a competent overview of any topic in seconds. But the ceiling — the kind of understanding that changes how you think, that lets you generate new insights — still requires deeper engagement. The question is whether you know which layer you're operating at, and whether you've chosen the right tool for the job.

Here's where the conventional framing breaks down. The debate is usually staged as "book vs. AI summary" — as though these are two competing products and you have to pick one. But that misses what's actually happening.

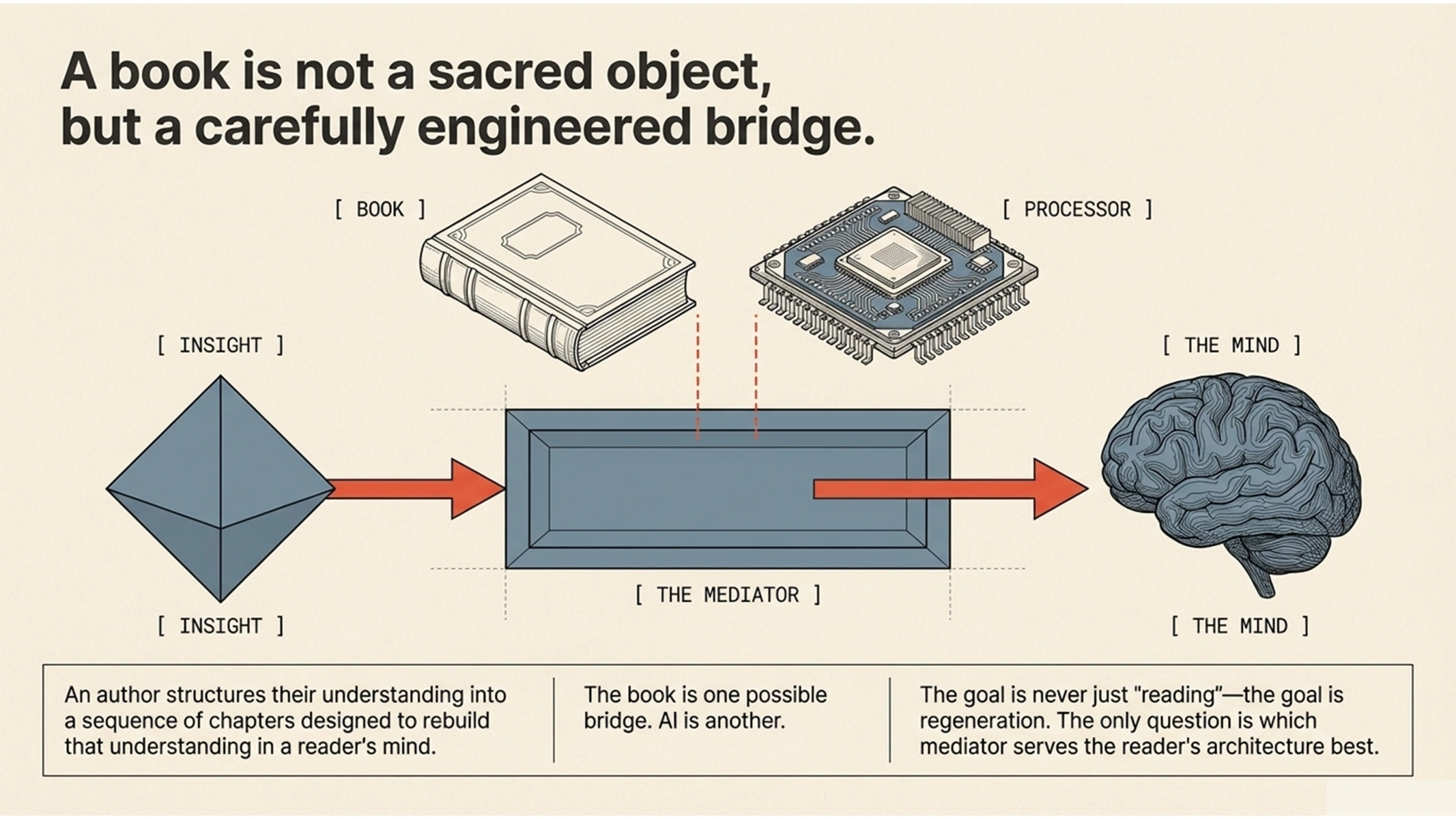

A book is not a sacred object. A book is a mediator — one possible bridge between an insight and the mind of a reader. An author who has spent years thinking about a topic, building experience and credibility, structures their understanding into a sequence of chapters designed to rebuild that understanding in someone else's mind. The book is their best attempt at mediation. But it's not the only possible one.

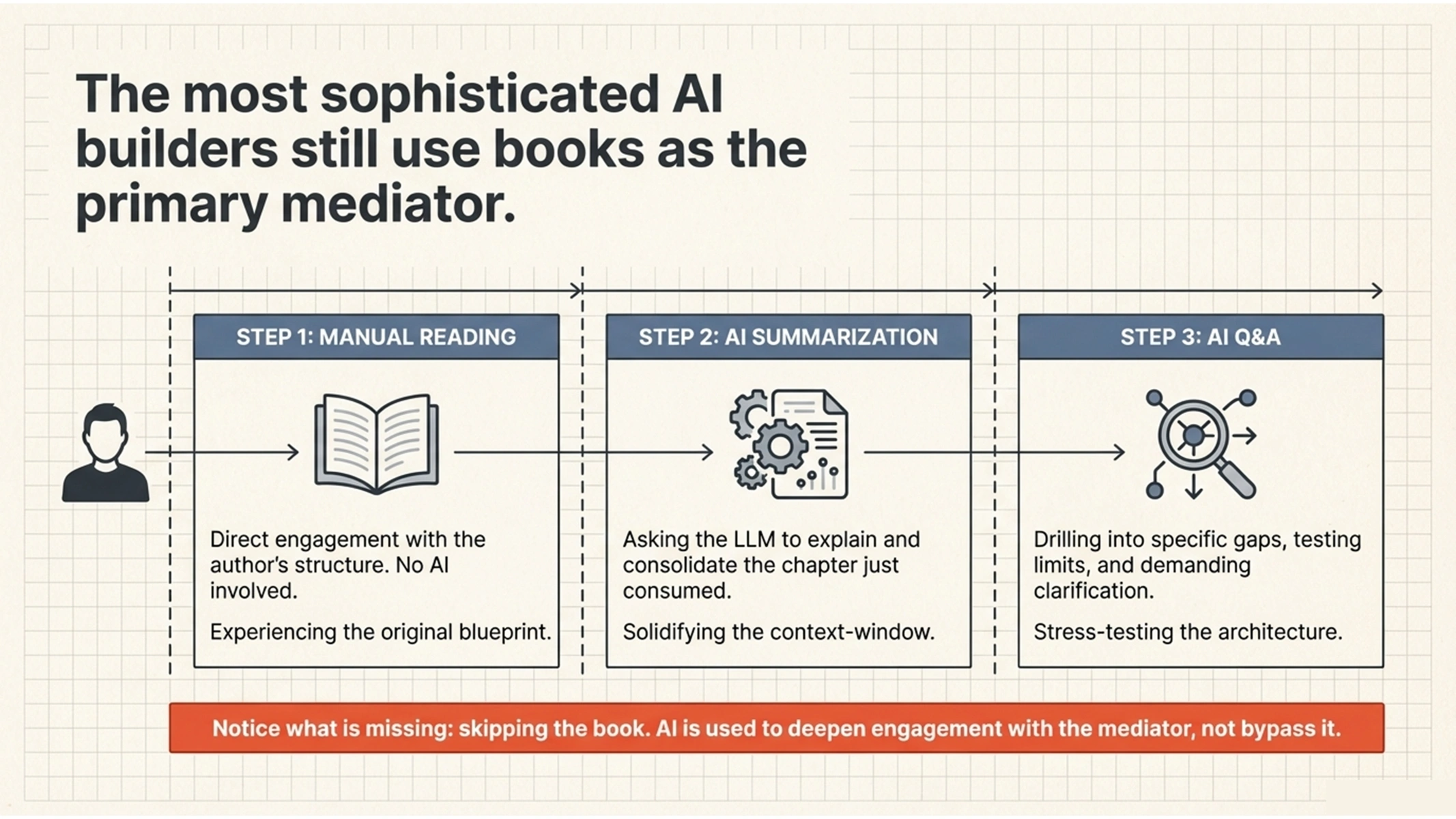

Karpathy himself illustrates this tension beautifully. In November 2025, he shared a reading workflow that has become one of his primary uses for AI: a three-pass method. Pass one is manual reading — he reads the chapter himself. Pass two: he asks the LLM to explain and summarize what he just read. Pass three: Q&A, where he drills into the parts he didn't fully understand. He built an open-source tool called reader3 specifically to make this process frictionless.

Notice what he does not do. He does not skip the book. He starts with manual reading. He engages with the author's structure first. The AI enters only after he has done the work of direct engagement. Even one of the most sophisticated AI practitioners alive treats the book as the primary mediator — and uses AI to deepen what the book started.

But then Karpathy says something else. In the same post, he predicts a future where writers increasingly think less about writing for humans and more about writing for LLMs — because once an LLM understands the idea, it can personalize and serve it to each individual reader. The book becomes input for the AI, which becomes the actual mediator between the insight and the person.

These two positions — reading the book yourself first, and having the AI read it for you — sit in direct tension. And that tension is the most honest map of where we actually are.

The resolution isn't to pick a side. It's to recognize that the goal is always regeneration of understanding. The question is which mediator — book or AI or both — best enables that specific reader to do that regeneration. Neither is universally superior.

When there's a good match — the book's level fits your background, your interest aligns with what the author offers, and the quality of the thinking is worth surrendering to — the book is the most powerful vehicle for regenerating that understanding.

When there's a mismatch — the book is too basic, too advanced, or only partially relevant to your question — an AI mediator can genuinely help. It can extract what's relevant, explain what's unclear, skip what you already know. In those cases, an additional layer of mediation isn't laziness. It's the right decision.

And sometimes — as Karpathy's own practice demonstrates — you need both. The book first, to encounter the author's full thinking. Then AI, to consolidate, clarify, and push your understanding further.

But here is the part that gets lost in the efficiency argument. Even when the book is the right mediator — even when you read the full text, cover to cover — the reading itself is not the learning. Reading is consumption. Learning is reconstruction.

When you read a full book, the content arrives unpersonalized. The author wrote for an imagined audience, not for you specifically. Even a great match between reader and book is still not fully targeted to your situation, your questions, your existing knowledge. The work of turning consumption into understanding is precisely the work of personalizing what you consumed. What does this mean for my life? How does this connect to what I already knew? What question was I carrying before I picked up this book? What are my own examples, my own analogies for what the author is describing?

This is the bridge between context-window knowledge and weight-layer knowledge. When you generate your own connections, your own applications, your own reformulations of someone else's ideas — that's when the knowledge starts to change your weights. That's when the book stops being something you read and becomes something that changed how you think.

For a deeper exploration of this process — the specific techniques for turning what you've read into lasting understanding — I've written extensively about how to remember what you read and about the critical difference between the book you read and the book you query.

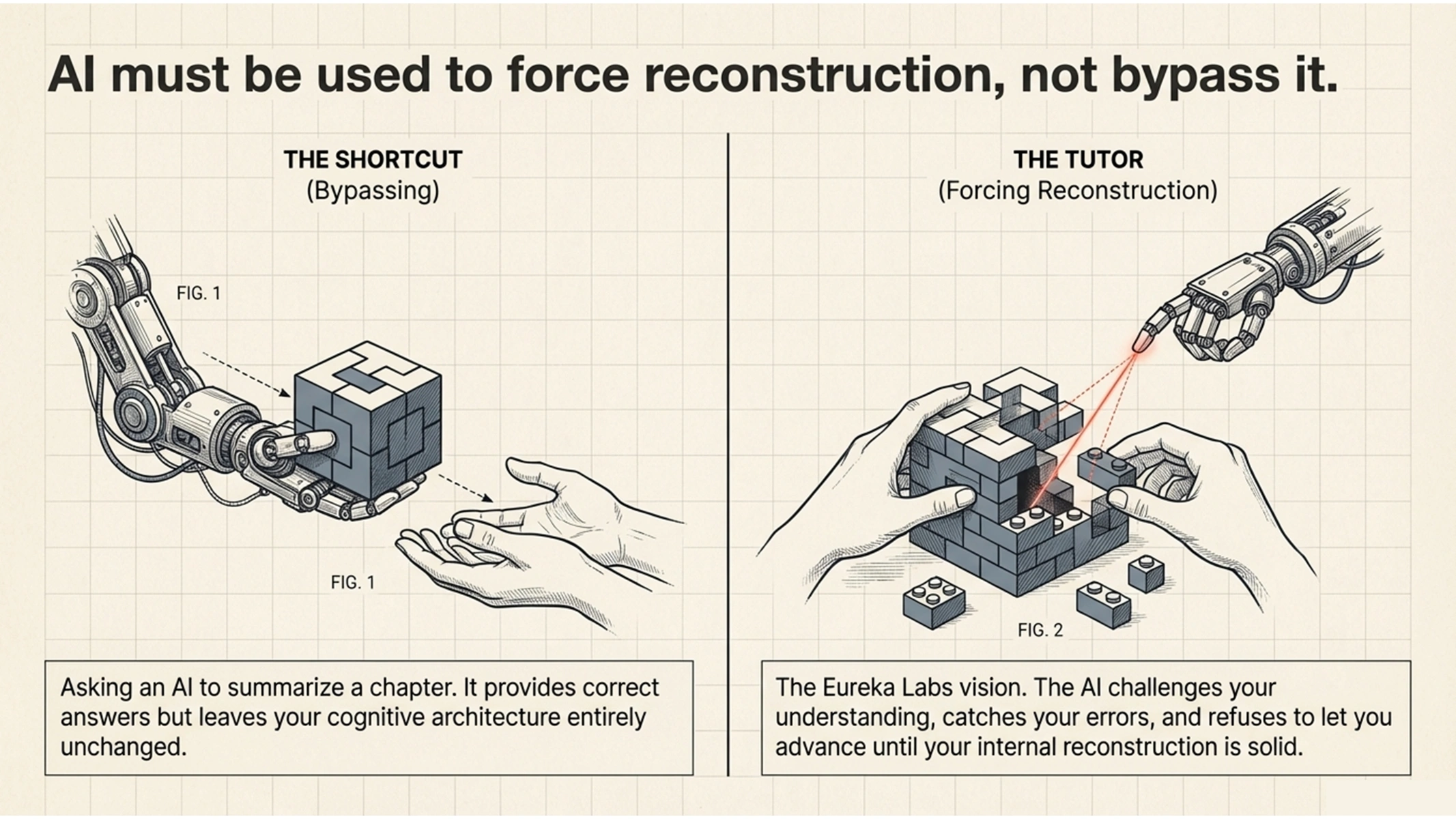

AI can play a powerful role here, but only if it's used to force reconstruction rather than bypass it. There's a world of difference between asking an AI to summarize a chapter for you (bypassing) and asking an AI to challenge your understanding of a chapter you just read (forcing reconstruction). Karpathy's three-pass method works precisely because the AI enters in reconstruction mode, not in substitution mode.

The vision behind Eureka Labs, Karpathy's AI education company, captures this well: imagine studying physics with Feynman guiding you step by step. The power of that vision isn't that Feynman explains things clearly. It's that a great tutor forces you to rebuild the understanding yourself, catching your errors, filling your gaps, refusing to let you move on until the reconstruction is solid.

The difference between AI-as-shortcut and AI-as-tutor is whether it does the reconstruction for you or makes you do it yourself.

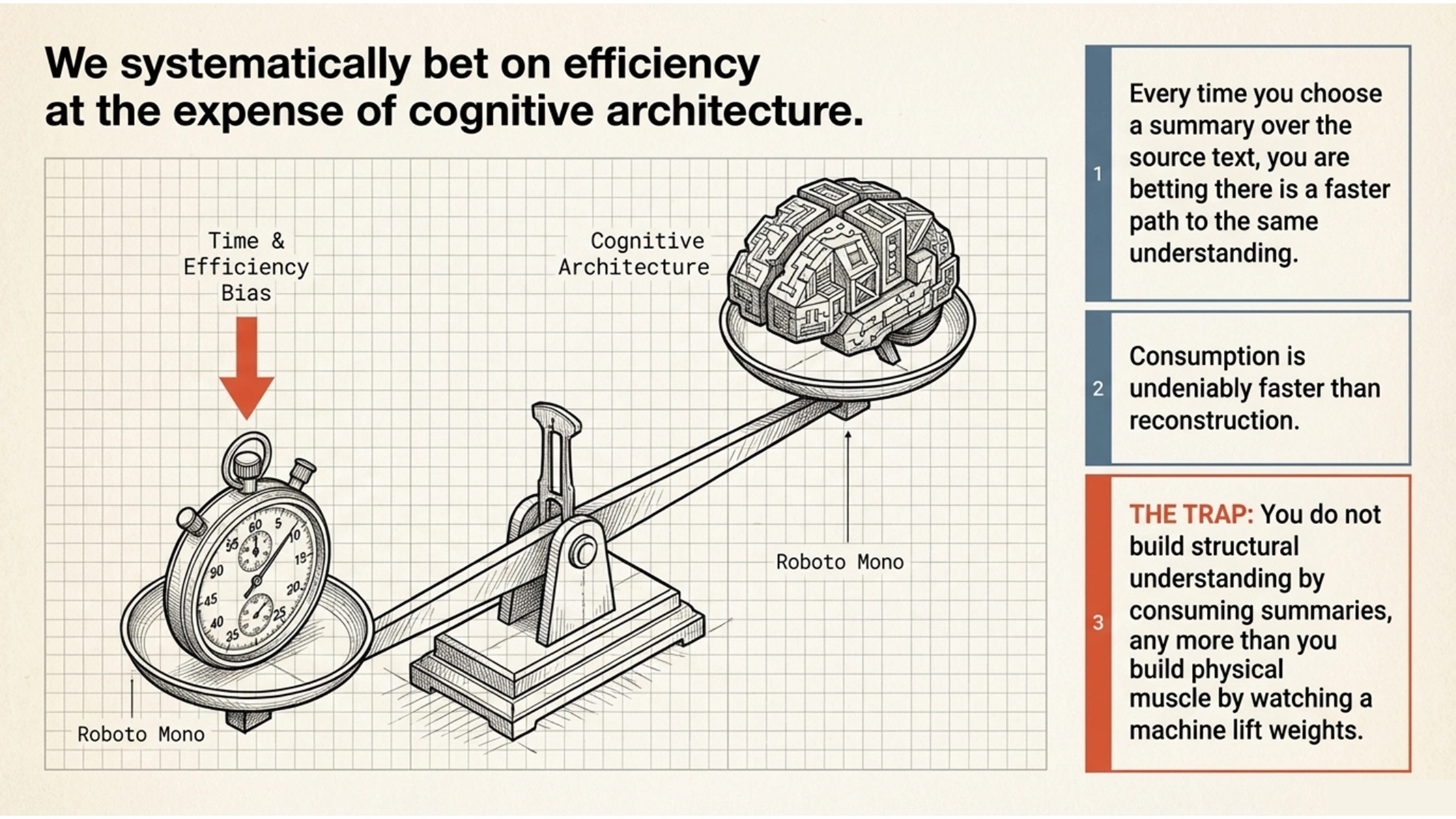

Every time you reach for a summary instead of reading the book, you are making a bet. You are betting that the author — who spent months or years building the experience, the credibility, and the carefully structured argument to serve as the best possible mediator for this knowledge — is not the best mediator for you. Sometimes that bet is correct. The mismatch is real.

But there is a systematic bias in how most people make this bet, and it runs toward efficiency. It is quicker to read a summary — quicker to consume, that is. But consumption isn't learning. The reconstruction has to happen in your own mind. And for that reconstruction, a quick summary is almost never the best starting point.

Before you skip the book, ask yourself: is this context-window knowledge — something specific I need to retrieve and use — or weight-layer knowledge, something that could change how I think? And am I choosing the faster path because it's genuinely better for reconstruction, or because reconstruction is hard and I'd rather skip it?

Deep reading survives. Not because books are sacred, but because some knowledge needs to change your weights. And the only way to change your weights is to do the reconstruction yourself — to take someone else's thinking and rebuild it, piece by piece, in the architecture of your own mind.

No shortcut can do that for you. But the right tools, used the right way, can make sure you don't do it alone.

Next in this series: The Future of the Book: Social — What Happens to Books as Shared Reference Points?

Ready to shape the future of DeepRead? Join the co-development community.