In the first article of this series, I argued that text — structured, sequential language designed to rebuild understanding in the reader's mind — survives as a format. Not out of nostalgia, but because it maps to something fundamental about how thinking works and how knowledge travels between minds.

Now we move one link down the chain. If the format endures, what happens to the production of that format when the barrier to creating it drops to near zero?

For most of human history, writing a book required a rare combination: the ideas, the education, the skill to structure an argument in prose, and the sheer stamina to sit alone with a manuscript for months or years. That combination acted as a filter. Not a perfect one — plenty of brilliant thinkers never wrote books, and plenty of mediocre ones did — but a filter nonetheless. It kept the total number of books manageable, and it meant that the act of producing a finished text was itself a signal of at least some level of commitment and capability.

That filter is dissolving. AI can now take a rough set of ideas — spoken aloud, jotted in notes, scattered across conversations — and produce polished, structured text. Not perfect text, not necessarily good text, but text that looks and reads like a real book. The execution barrier has dropped from years to weeks, from deep expertise to basic literacy.

So what happens next?

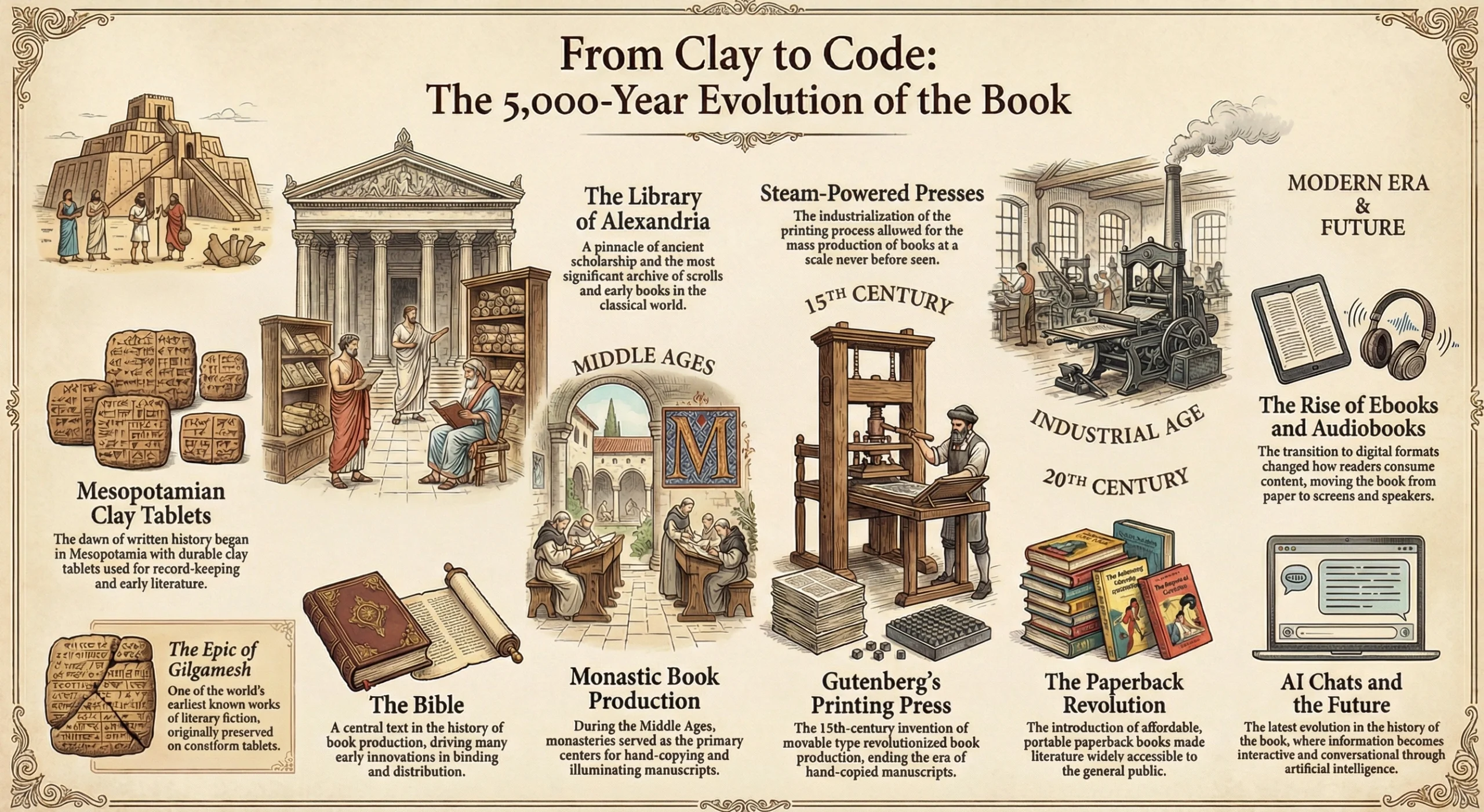

Before Gutenberg's printing press, every book was copied by hand. Scribes — monks, scholars, trained professionals — decided which texts were worth reproducing. That was the filter. It meant that only works deemed universally important got copied: the Bible, Aristotle, medical treatises. The economics of hand-copying simply didn't allow for niche interests.

When printing arrived in the fifteenth century, the fears were remarkably familiar. A flood of low-quality material. Loss of authority. Anyone can publish now — how will we find what's good? The establishment worried that the wrong ideas would spread unchecked.

And what actually happened? The fears came true and so did the hopes, simultaneously. There was more nonsense in circulation, yes. But there were also local histories, practical manuals, niche philosophical arguments, pamphlets about very specific grievances. The Reformation. The Scientific Revolution. Widespread literacy. Topics that no scribe would have prioritized suddenly had a voice. Knowledge that had been locked inside practitioners' heads, because the copying barrier kept it there, could finally reach others.

We are at a similar inflection point. The numbers tell the story: Amazon's Kindle Direct Publishing platform alone processes roughly 1.4 million self-published titles per year. In 2024, the publishing platform Draft2Digital reported volumes trending about 50 percent higher than usual. By some estimates, one in ten new self-published books by late 2025 had significant AI contribution — up from essentially zero in 2022. A 2025 survey of over 1,200 authors found that nearly half were already using generative AI in their work.

The flood is here. And just like after Gutenberg, the most interesting consequence isn't the volume itself — it's what gets written that wouldn't have been written before.

Someone with deep expertise in traditional Japanese joinery, or small-scale urban farming, or the history of a specific regional dialect — someone who thinks about this every day but would never sit down to write a 300-page book — can now produce that text. The world gets access to knowledge that was previously inaccessible, not because it didn't exist, but because the writing barrier kept it locked inside the heads of practitioners.

The execution barrier dropping doesn't just mean more of the same. It means entirely new categories of knowledge entering the written record.

And the formats diversify, too. The same set of ideas can now easily become an article, a book chapter, a lecture script, a conversation. Creators are no longer locked into whichever format they happened to be skilled at producing. A brilliant workshop teacher who could never organize her knowledge into a written book can now do it. A researcher whose insights lived only in conference talks and lab notebooks can turn them into accessible prose.

We can already see how this connects to the way successful books work today. The typical non-fiction book now sits at the center of an ecosystem — a website with case studies, a podcast, a community, social media channels. Sometimes the community comes first and the book follows; sometimes the book sparks the community. These channels intermingle, and the more niche the topic, the more this ecosystem matters. AI makes all of these channels easier to manage, which means more niche communities can sustain themselves, which means more specialized knowledge finds its audience.

There's a progression worth noticing. The pen and paper made writing possible. The typewriter made it faster. The word processor let you rearrange and edit without rewriting. Each of these removed a mechanical barrier. But AI crosses a different threshold. It doesn't just handle the mechanics of putting words on a page — it handles the structural intelligence of writing. Grouping ideas that belong together. Finding a logical sequence for an argument. Maintaining consistent tone across thousands of words. Formulating individual sentences that hit the right register.

This is the part that used to require not just effort, but a specific kind of skill. And it's the part that blocked a lot of people who had genuine ideas but couldn't get them into coherent form.

I know this personally. I'm one of those people who has always struggled with the blank page. I'll have an idea, sit down to write, draft a first sentence, immediately start editing it, lose the thread of the original thought, and sometimes lose the whole idea entirely. My ambition for quality — the urge to get it right from the start — has always been both a driver and a blocker.

What I've found is that AI dissolves the wall between having ideas and producing a finished text. It does this not by thinking for me, but by letting me separate the stages of creation that I used to collapse into one painful process. I can sit down with ten free minutes during a busy weekend with my family, record my thoughts as a voice note, and know that this raw material will be structured and shaped later. I can brainstorm without editing. I can generate without judging. Knowing that an LLM will keep everything and produce a coherent first draft is what lets me focus on the part that actually matters: the ideas themselves.

And here's the thing I keep discovering: when I start a new topic, I often begin with the nagging feeling of having nothing to say. But once I start talking, the ideas flow. And when the article is finished, I realize that almost all of the substance came from me. The AI structured it, asked questions that sparked further thoughts, and formulated the prose — but no new content came from the machine. It was all already in my head, waiting for a process that could get it out.

This is exactly the fault line in the current debate about AI and writing. Productivity expert Tiago Forte recently shared that Claude now drafts 90% of his content in his own voice and style, saving him hours per piece. The backlash was immediate and sharp. One reply captured the sentiment perfectly: "If someone didn't put in the time to write something themselves, why should I put in the time to read it myself?"

That's a powerful objection. I feel it myself. When I see content that's obviously AI-generated, something in me resists — why invest my attention in something no human invested their effort in?

But then I notice something: every time I have a conversation with an AI, I read its responses without hesitation. Nobody "wrote" those words either. No human sat down and crafted that specific paragraph for me. And yet I read it, engage with it, learn from it — because it's personalized, specific to my question, relevant to my situation. The "nobody bothered writing it" objection collapses the moment you realize that the value was never in the labor of writing itself. The value is in the thinking behind it.

One of the more thoughtful responses in the Forte debate put it precisely: "The subtle thing in your message is: created WITH AI and not BY AI." That distinction is everything. Created with AI means a human did the thinking, the selecting, the judging of what matters — and the machine handled the execution. Created by AI means nobody did the thinking at all. The first can be excellent. The second is slop.

So the execution barrier drops, more people write, the range of topics expands, niche knowledge enters the written record, and creators can focus on ideas rather than prose mechanics. All good. But the uncomfortable question remains: does any of this make the best books better?

"Of all that is written, I love only what a person hath written with his blood. Write with blood, and thou wilt find that blood is spirit."

— Friedrich Nietzsche, Thus Spoke Zarathustra

Nietzsche is pointing at something that AI can't provide: the inner fire. The obsessive drive to wrestle with an idea until it yields something true. The personal stake — the willingness to put your own thinking on the line, to risk being wrong, to spend years chasing an explanation that might not work. That intensity isn't about the mechanics of writing sentences. It's about caring enough to push past the obvious answer.

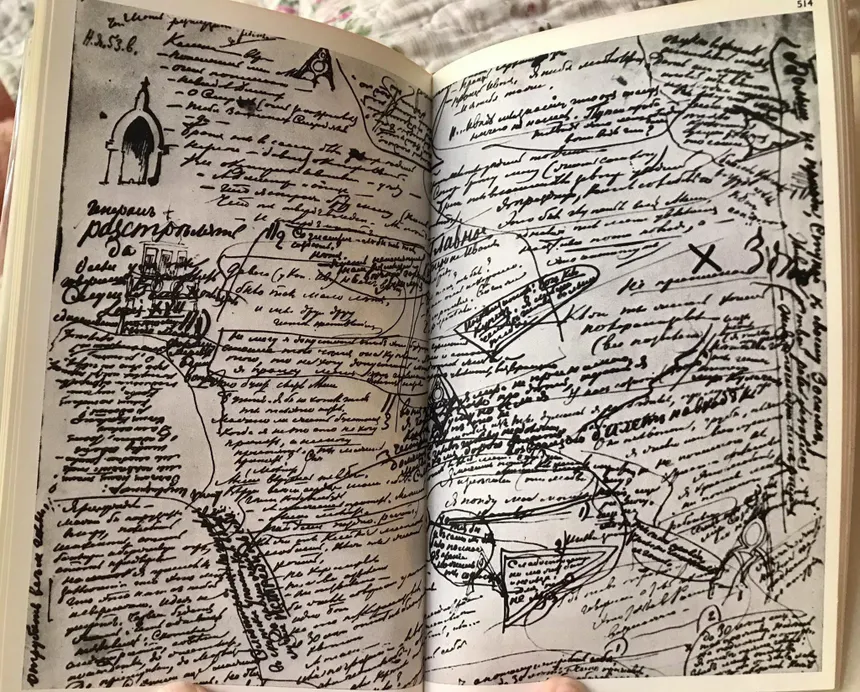

Consider Dostoevsky. He was not just a man with brilliant ideas who happened to be good at putting words on paper. His genius was unified — the ideas, the characters, the language, the structure, the psychological insight all fed each other. The friction of writing was part of his creative process. The struggle with a particular passage, a transition, a character's motivation — that struggle generated insights that wouldn't have appeared any other way.

I experienced this myself, on a much smaller scale, during my academic work: spending nights wrestling with a single transition between paragraphs, only to realize that the difficulty pointed to a gap in my analysis that needed a whole new section.

Would Dostoevsky with AI produce more? Almost certainly — he had far more ideas than he could execute in a lifetime. Would the additional output reach the same ceiling of quality? Possibly, for some works. Would it surpass what he actually achieved? I doubt it. The ceiling of what's possible in a book seems to depend on something AI doesn't have and can't provide — the depth of a mind fully committed to an idea.

And here's what makes this more than speculation. Think about what AI has had access to for three years now. Virtually all of human knowledge encoded in text. Every scientific paper, every philosophical argument, every novel, every mathematical proof — available to a system that can process it all at once and find patterns across disciplines that no human mind could hold simultaneously. Enormous energy, talent, and capital have gone into making these systems more capable. They are advancing fast, becoming genuinely useful across countless domains.

And yet: no AI has produced an original explanation for consciousness, or free will, or the nature of creativity. No AI has written a novel that anyone would place alongside The Brothers Karamazov. No AI has proposed a new scientific theory that reshapes how we understand the world. In science, AI can compress years of hypothesis-testing into days — researchers at Imperial College London found that Google's AI co-scientist produced in days the same hypothesis their team spent years developing. A mathematician at UCLA spent twelve hours in dialogue with an AI and proved something new about optimization. But in both cases, the human provided the creative direction. The AI accelerated the work within a framework that a human mind established.

AI accelerates within known frameworks. It does not generate the frameworks themselves. That's a telling limitation, and it has direct implications for the future of books. The works that matter most — the ones that change how we think — are precisely the ones that establish new frameworks. And those still require a human mind writing with blood.

So the picture that emerges is not the utopian "everyone writes masterpieces now" and not the dystopian "everything is slop." It's more nuanced than either.

The quantity of books will continue to increase dramatically. The range and specificity of topics will expand — knowledge that was previously locked inside practitioners' heads will enter the written record, and that is genuinely good. The feedback loop between idea and published text will compress, allowing faster iteration and tighter connections between authors and their communities. The ecosystem around books — podcasts, discussions, courses, social channels — will grow richer, especially for niche topics.

But the upper end of the quality spectrum — the books that provide genuinely new explanations, that establish new ways of seeing the world — those will not obviously improve. AI lets more thinkers get their ideas into writing, which means a few people who would otherwise have been blocked might produce remarkable work. But the truly exceptional book has always required more than ideas and more than execution. It requires the kind of total commitment that Nietzsche called writing with blood — and that remains as rare and as human as it ever was.

And this creates a new problem. When the filter of execution difficulty is removed, and the volume of published material multiplies, a different question becomes urgent: how do you know which books to trust? When anyone can produce a polished text, how do you distinguish the author who wrestled with ideas for years from the one who prompted an AI for an afternoon? That's no longer a question about creation. It's a question about authority. And it's where this series goes next.

Next in this series: The Future of the Book — How Do You Trust a Book When Anyone Can Write One?
