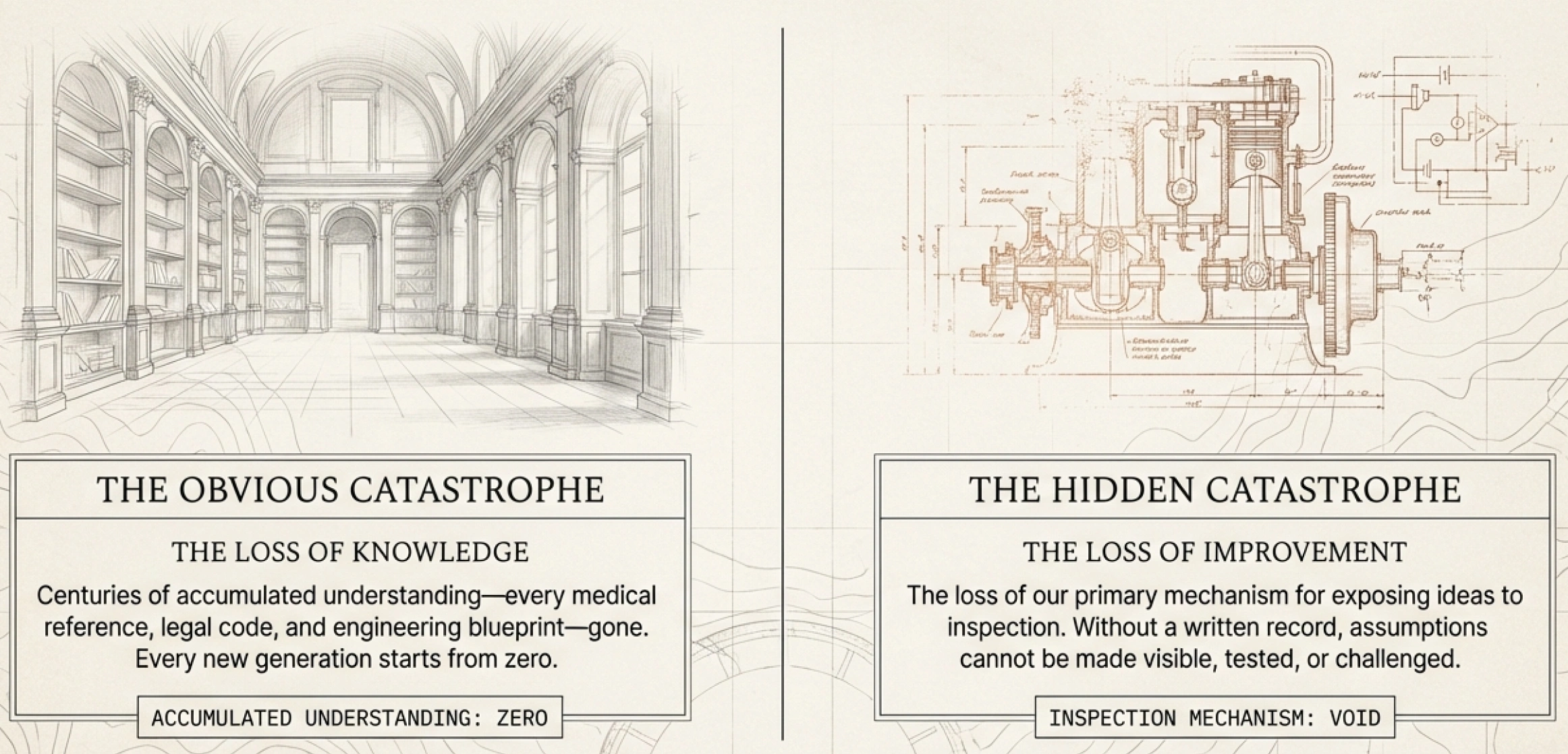

Think about what would happen if every book disappeared overnight. Not just the physical objects — the content. Every textbook, every legal code, every medical reference, every record of how we built what we've built.

The obvious catastrophe is the loss of knowledge. Centuries of accumulated understanding, gone. We'd have to rediscover everything from scratch — how diseases spread, how bridges hold weight, how economies function. This is the first thing books do for society: they preserve what previous generations have learned so that each new generation doesn't start from zero.

But there's a second catastrophe that's less obvious. If every book disappeared, we'd also lose our primary mechanism for improving what we know. Because books don't just store ideas — they make ideas available for inspection. When a medical theory is written down, another doctor can read it, find the flaw, and propose a correction. When a legal argument is published, others can challenge it. When an economic model is laid out in full, its assumptions become visible and testable.

Books do two things for society. They preserve knowledge — preventing us from sliding back into ignorance. And they provide the medium through which knowledge gets challenged, corrected, and grown.

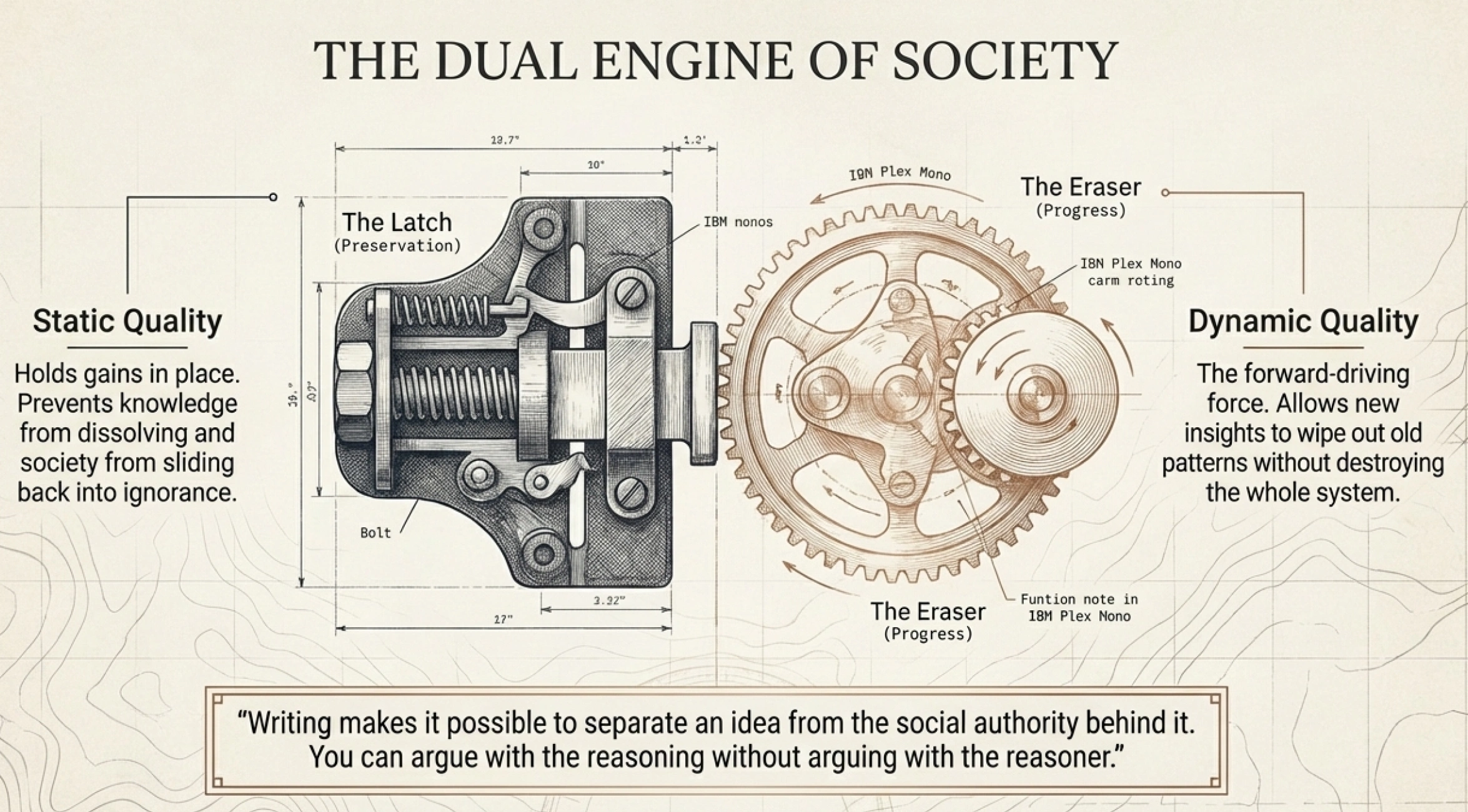

Robert Pirsig, the author of Zen and the Art of Motorcycle Maintenance, gave these two forces useful names in his follow-up book Lila. He called them static quality and Dynamic quality. Static quality is the latching mechanism — what holds gains in place and prevents them from dissolving. Dynamic quality is the forward-driving force — what pushes beyond what already exists.

> Neither static nor Dynamic Quality can survive without the other.
>
> Robert Pirsig, Lila

A society that only preserves knowledge stagnates. A society that only chases new ideas without preserving them collapses into chaos with every generation. Books are the technology that serves both sides at once.

Consider how this works in science. A scientific paper records a finding — that's the static function, the preservation. But the same paper also exposes its methods and reasoning to the entire scientific community, inviting scrutiny, replication, and challenge — that's the Dynamic function. Pirsig captured this elegantly:

> Science always contains an eraser, a mechanism whereby new Dynamic insight could wipe out old static patterns without destroying science itself. Thus science, unlike orthodox theology, has been capable of continuous, evolutionary growth.
>
> Robert Pirsig, Lila

That eraser — the ability to correct and replace ideas without destroying the whole system — depends on writing. When ideas exist only as spoken tradition, they're fused with the authority of whoever speaks them. Challenging the idea means challenging the person. But once an idea is written down, it stands on its own. You can argue with the reasoning without arguing with the reasoner. Writing is what makes it possible to separate an idea from the social authority behind it — and that separation is what allows genuine criticism and progress.

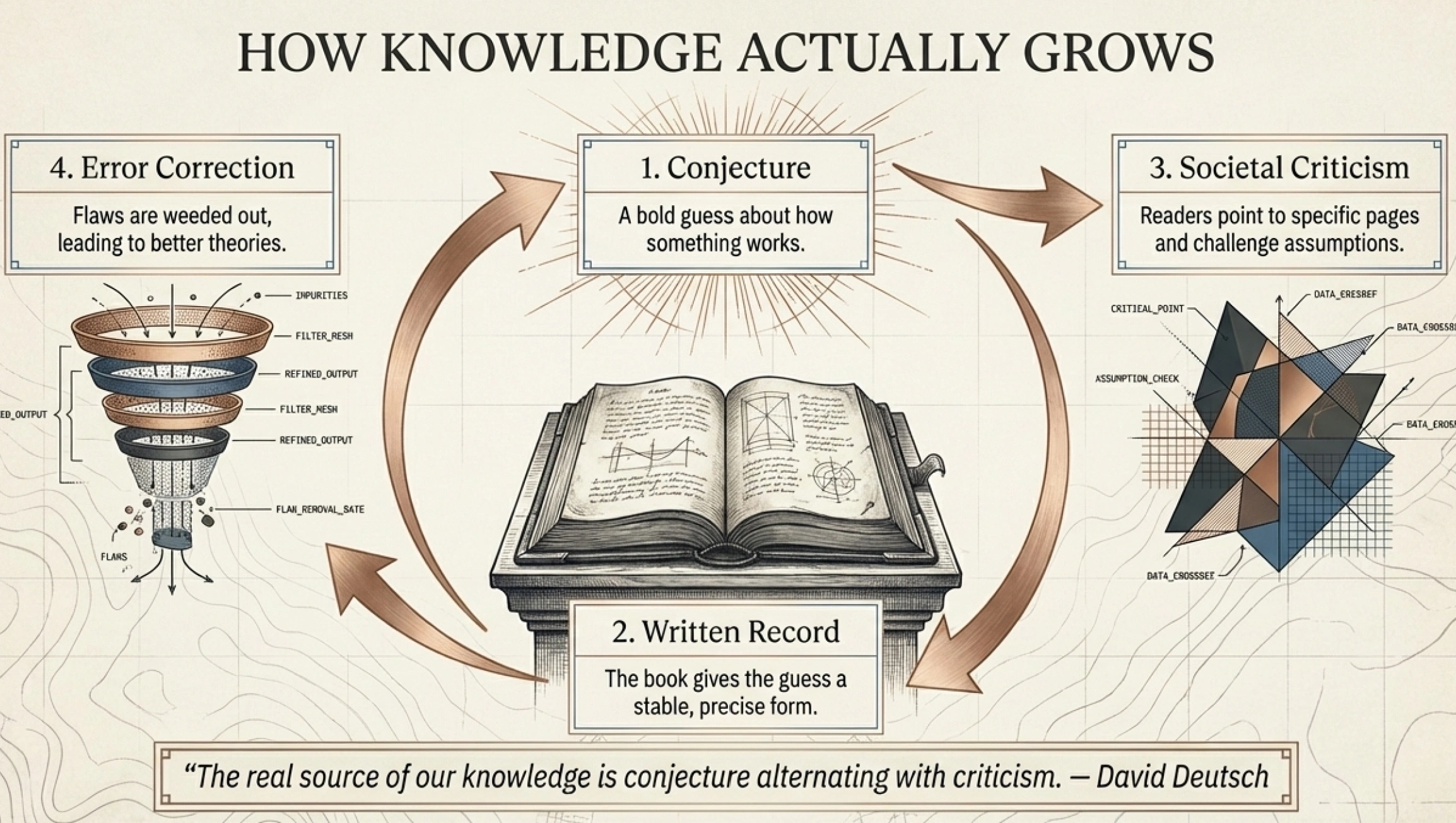

The physicist David Deutsch, in The Beginning of Infinity, articulated why this matters so deeply. He argues that all knowledge grows through a specific process: someone proposes an explanation — a conjecture, a guess about how something works — and others try to find errors in it. The explanations that survive criticism are the ones worth keeping. The ones that don't get replaced by better ones.

> The real source of our theories is conjecture, and the real source of our knowledge is conjecture alternating with criticism.
>
> David Deutsch, The Beginning of Infinity

Books are the medium that makes this cycle possible at scale. They give conjectures a stable, transmissible form. They make criticism precise — you can point to page 47 and say "this assumption doesn't hold." And they accumulate: each generation builds on what the previous one established, challenges what seems wrong, and pushes further.

So that's the double function. Books as society's memory — the latching mechanism that prevents knowledge from being lost. And books as society's engine of progress — the medium through which knowledge gets tested, corrected, and extended.

Now a new force has entered this picture.

Over the past few years, large language models have become extraordinarily capable at working with text. They can produce it, summarize it, search it, recombine it, and translate it at speeds and scales no human can match. They have access to essentially all recorded human knowledge in written form. Billions of dollars and some of the sharpest minds in technology are devoted to making them better.

What does this mean for the two things books do?

On the preservation side, the picture is encouraging. Our capacity to store, search, retrieve, and filter knowledge has grown enormously. More text is captured than ever. Finding relevant explanations on specific questions is faster and more precise than it has ever been. The latching mechanism — society's ability to hold on to what it knows — is stronger than it was before AI.

On the knowledge-creation side, things get more interesting. We now have a force that can manipulate language at superhuman speed, with access to all existing written knowledge. If books have been our primary medium for growing knowledge through conjecture and criticism, then a question becomes unavoidable: does this new force create new knowledge on its own?

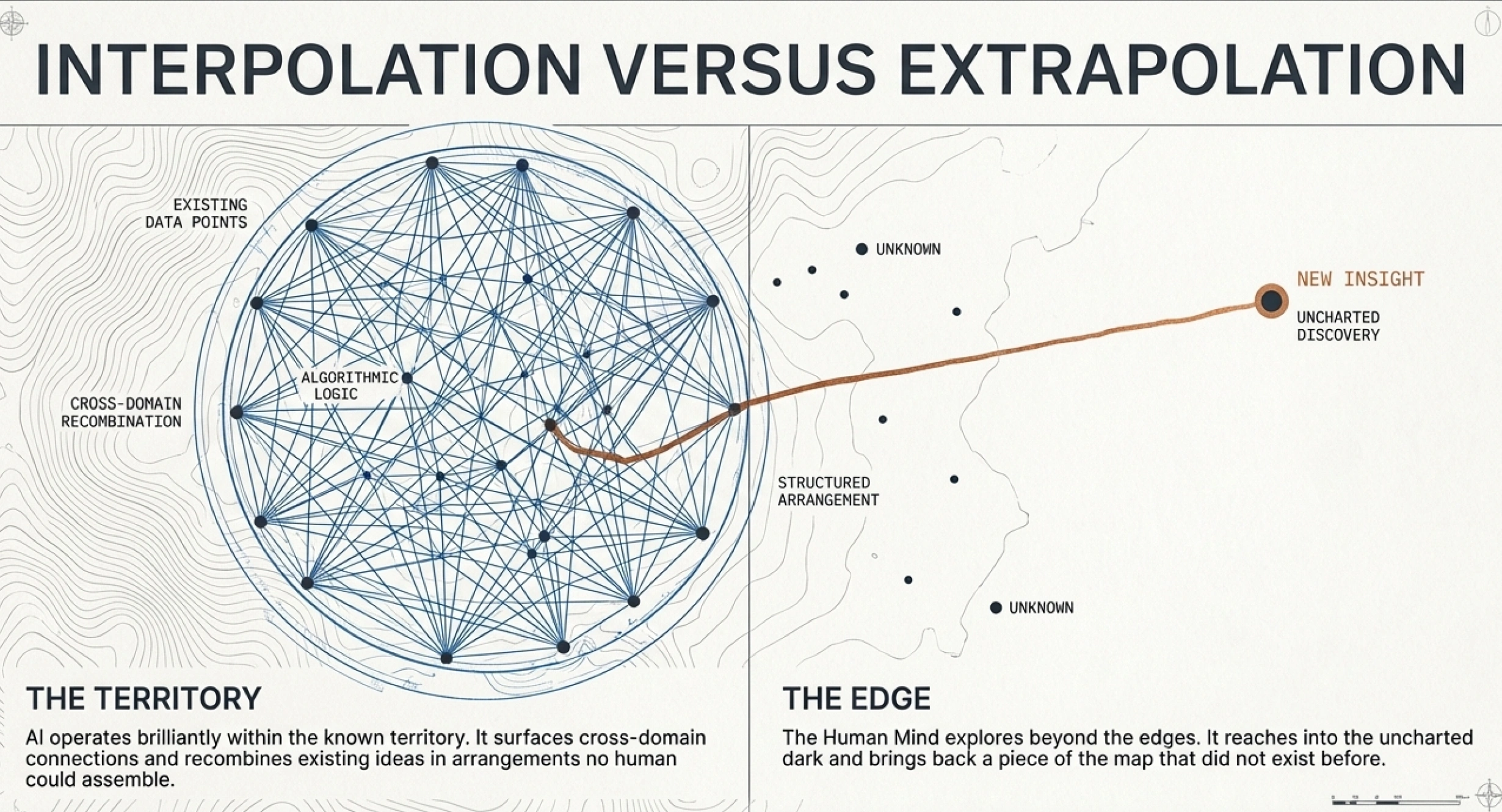

Here's a useful way to think about it. Imagine all existing human knowledge — every theory, every explanation, every working model — as territory on a map. Human thinking, at its best, explores beyond the edges of that territory. It reaches into the unknown and occasionally brings back something genuinely new. A piece of the map that didn't exist before.

AI operates brilliantly within the territory. It finds paths between known locations that humans might have missed. It surfaces connections across domains. It combines existing ideas in arrangements no single person might have assembled. All of this is useful. But the question is whether it also extends the edges — whether it discovers genuinely new territory.

The evidence so far points in a clear direction. Consider the three most celebrated examples of AI contributing to science.

AlphaFold predicts the three-dimensional structure of proteins, a problem that had stumped biology for decades. Its developers received a share of the 2024 Nobel Prize in Chemistry, and it has generated over 200 million structural predictions. Remarkable. But as structural biologists have noted, AlphaFold works by recognizing patterns in existing data. It predicts what a protein's structure will be. It doesn't explain why proteins fold the way they do. Prediction within the known territory, not a new explanation.

FunSearch, developed by Google DeepMind, found genuinely new solutions to a long-standing open problem in mathematics. The solutions were verifiable and previously unknown — the strongest counterargument to the idea that AI can't produce anything new. But look at the mechanism: an LLM generated millions of candidate solutions, an automated evaluator checked each one, and the best were fed back in. Over days and millions of attempts, a new solution emerged. Evolutionary search at massive scale — powerful, but not the same as an explanation of why the problem has the structure it does.
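The generate, evaluate, and feed-back loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not DeepMind's implementation: `propose` is a hypothetical stand-in for the LLM (it only flips one bit of a good candidate), `evaluate` scores a made-up objective, and every name and parameter here is invented for the example.

```python
import random


def evaluate(candidate: list[int]) -> int:
    """Automated evaluator: score a candidate (toy objective, higher is better)."""
    # Hypothetical stand-in problem: count how many positions hold a 1.
    return sum(candidate)


def propose(parent: list[int], rng: random.Random) -> list[int]:
    """Stand-in for the LLM: return a slightly modified copy of a good candidate."""
    child = parent.copy()
    i = rng.randrange(len(child))
    child[i] = 1 - child[i]  # flip one bit
    return child


def evolutionary_search(length=20, pool_size=5, rounds=2000, seed=0):
    """Generate candidates, score them, and feed the best back into the pool."""
    rng = random.Random(seed)
    pool = [[rng.randint(0, 1) for _ in range(length)] for _ in range(pool_size)]
    history = []  # best score after each round
    for _ in range(rounds):
        # Pick the better of two random pool members as the parent.
        parent = max(rng.sample(pool, 2), key=evaluate)
        pool.append(propose(parent, rng))
        # Keep only the top-scoring candidates for the next round.
        pool.sort(key=evaluate, reverse=True)
        pool = pool[:pool_size]
        history.append(evaluate(pool[0]))
    return pool[0], history
```

Calling `evolutionary_search()` returns the best candidate found and the best score after each round. The score never drops, because the pool always keeps its top candidates, yet nothing in the loop records why the winning candidate is the best one.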

AI Co-Scientist, Google's multi-agent system for generating scientific hypotheses, helped identify drugs that could be repurposed for treating liver fibrosis. At one research institution, it produced in days the same hypothesis that a human team had taken years to develop. The key word: same. It reproduced an existing human insight, faster.

The pattern across all three: AI found solutions within well-defined problem spaces, or it reproduced what human scientists had already discovered. In every case, the map got filled in with extraordinary speed and precision. But no new territory was charted.

This is worth sitting with. We have a language force of unprecedented power. It has access to all written human knowledge. Enormous intelligence and resources go into its improvement every day. And it has not produced a single genuinely new explanation — no new theory of how something works, no reconceptualization of a problem that changed how we think about it.

Why?

There is no simple answer. In fact, the honest position is that we don't fully understand how new explanations are created in the first place — by anyone, human or machine. But three differences between how AI processes information and how human minds generate new knowledge seem to matter.

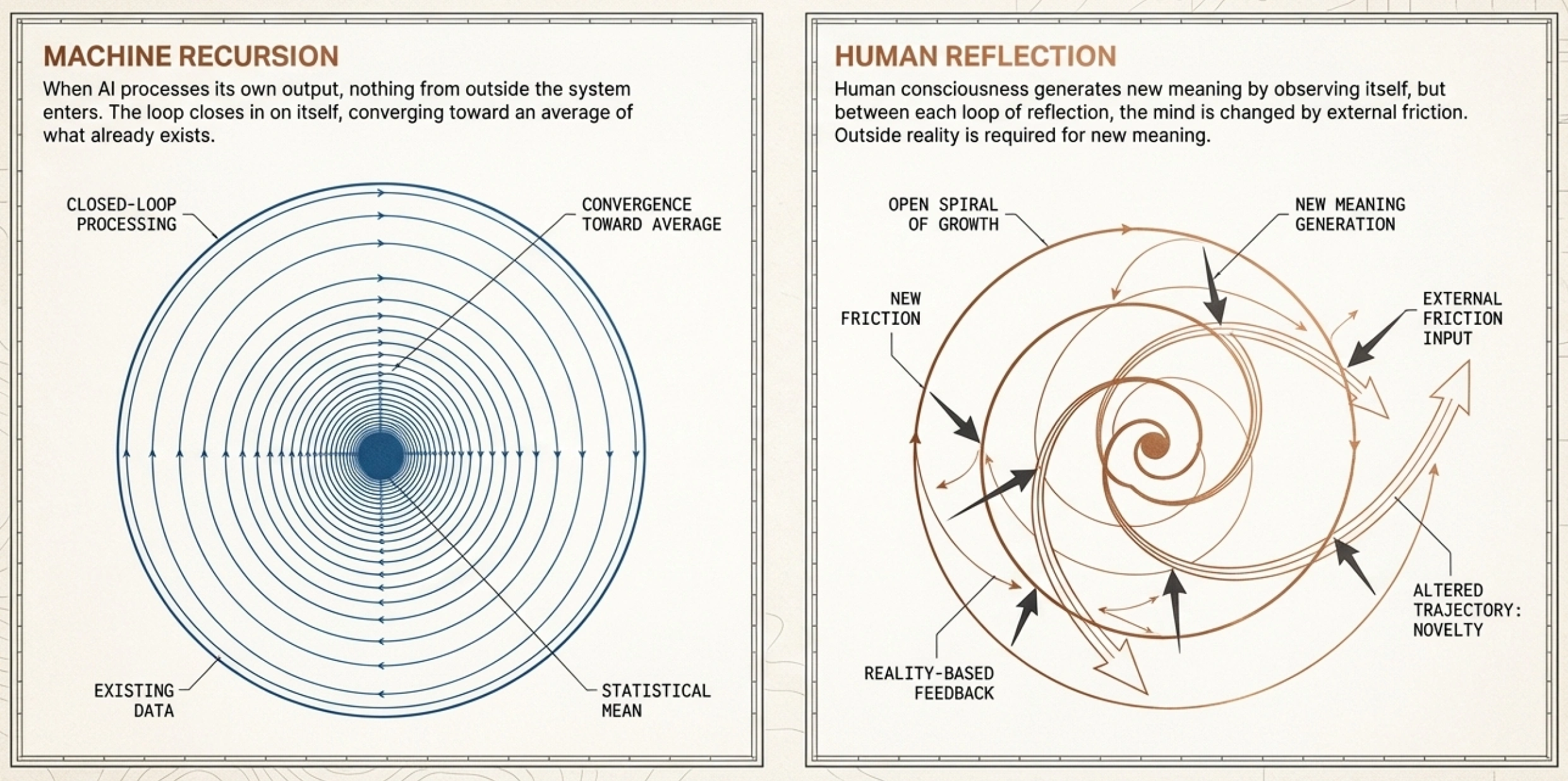

Contact with reality. When a scientist conjectures, they're working on a problem that exists in the physical world. The experiment fails. The bridge doesn't hold. The prediction turns out wrong. Reality pushes back, and that pushback is essential — it's how bad explanations get weeded out. AI doesn't have this contact. It processes text about reality, but reality itself never enters the loop. Its feedback comes from other text, from whether its output matches the statistical patterns in what humans have already written.

What it optimizes for. Large language models are trained to predict the most probable next word (strictly, the next token) given everything before it. This is a fundamentally conservative goal. The better the model gets at its job, the more faithfully it reproduces the statistical center of existing human text. A scientist, on the other hand, sometimes finds that the answer which actually works is the wildly improbable one: the idea that nobody has had before, the one that would score lowest on a "most likely next thought" test.
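A deliberately tiny sketch can make "predict the most probable next word" concrete. It assumes a bigram counter over a made-up sentence. Real language models use neural networks over tokens and usually sample rather than always taking the top choice, so this should be read as an illustration of the objective only. The corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram(text: str) -> dict[str, Counter]:
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def most_probable_next(counts, word):
    """Return the follower seen most often after `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Made-up training text: "holds" follows "bridge" twice, "fails" only once.
corpus = "the bridge holds the bridge holds the bridge fails"
model = train_bigram(corpus)
```

Here `most_probable_next(model, "bridge")` returns `"holds"`, the majority continuation. The rarer continuation, "fails", is in the training text but is never the model's top choice.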

What happens when it feeds back into itself. Douglas Hofstadter, in I Am a Strange Loop, explored the idea that consciousness involves a kind of self-referential loop — a system that generates new levels of meaning through the process of observing itself. Human thinking seems to involve something like this: you reflect on your own ideas, but between each round of reflection you've been changed by your encounters with the world. Each pass through the loop encounters genuinely new material from outside. When AI processes its own output, by contrast, nothing from outside the system has entered. The loop closes in on itself. Instead of generating new levels of meaning, it converges toward the average of what already exists.

These three observations don't fully solve the mystery. They describe conditions that appear necessary for knowledge creation but might not be sufficient. As Deutsch himself has acknowledged, how bold conjectures actually arise — how a human mind leaps beyond the known — remains one of the great unsolved problems. We can point to the conditions that seem to support it, but we cannot explain the mechanism.

What we can do is take the evidence seriously: unprecedented language power, access to all human knowledge, massive investment — and no new explanations. That pattern tells us something important about where new knowledge actually comes from.

This doesn't mean AI is useless for knowledge creation. Far from it. What it means is that AI's role is catalytic — it accelerates and supports the process, but it doesn't replace the essential ingredient, which is a human mind engaged with a real problem.

AI helps articulate half-formed thoughts. It surfaces connections across domains that a single mind might miss. It serves as a criticism partner, challenging arguments and pointing out what a line of reasoning overlooks. It lowers the barrier to expressing rough ideas — which means more conjectures get made, more attempts at explanation get tried. In Deutsch's framework, more conjectures subjected to rigorous criticism means faster progress.

AI amplifies whatever process it's attached to. Attached to a thinking, conjecturing mind, it accelerates discovery. Left to run on its own, it polishes the center of the existing map and calls it exploration.

The catalyst works. But only when there's something to catalyze.

And this is why books remain essential for society — not as nostalgic objects, but as a practical necessity for a civilization that wants to keep growing its understanding of the world.

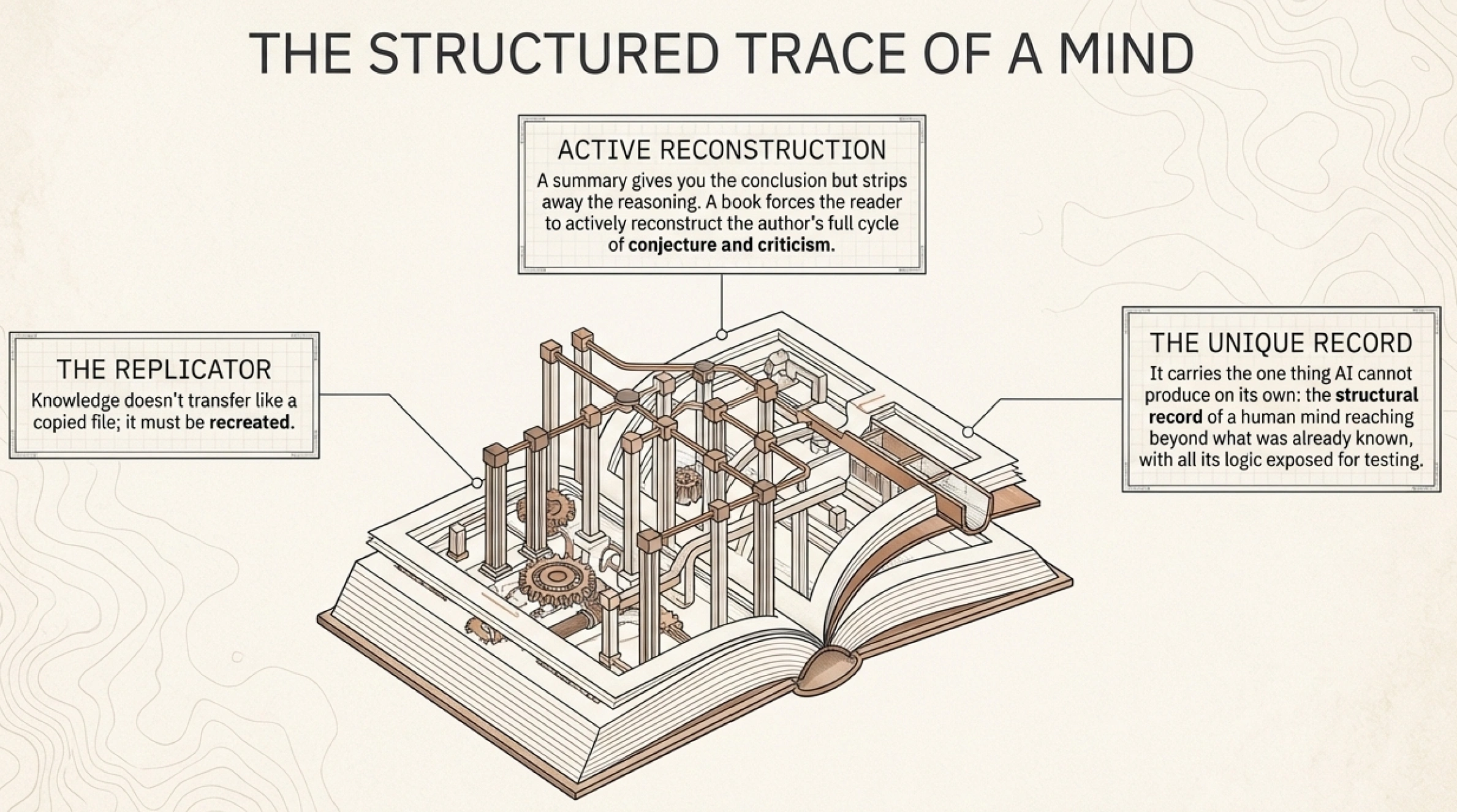

If new knowledge requires minds that are grounded in reality and engaged with real problems, then books are the artifacts of exactly that process. A book is the structured trace of a mind that went through the full cycle of conjecture and criticism while genuinely trying to explain something. When you read one deeply, you don't just receive information. You reconstruct the author's reasoning in your own mind — and that reconstruction is what enables you to then go further, to challenge it, extend it, or build on it.

Deutsch makes this point with precision. Knowledge doesn't transfer intact like a file being copied. It gets recreated:

> Unlike genes, many memes take different physical forms every time they are replicated. People rarely express ideas in exactly the same words in which they heard them. Yet we rightly call what is transmitted the same idea — the same meme — throughout. Thus the real replicator is abstract: it is the knowledge itself.
>
> David Deutsch, The Beginning of Infinity

The knowledge replicates faithfully because the reader reconstructs the explanation — actively rebuilds it. This takes depth. A summary gives you the conclusion but strips away the reasoning that makes the conclusion testable and improvable. A book gives you the full argument, with all its structure exposed, available for criticism. That's what keeps the cycle going.

Books carry the one thing this new language force cannot produce on its own: the record of genuine human conjecture — the structured trace of minds that were reaching beyond what was already known.

Throughout this series, we've explored how AI is reshaping every stage of the book's life, from format and creation to authority, curation, and consumption. Each stage is changing. But the reason books exist for a society, as the medium through which knowledge gets preserved and new knowledge gets created, persists.

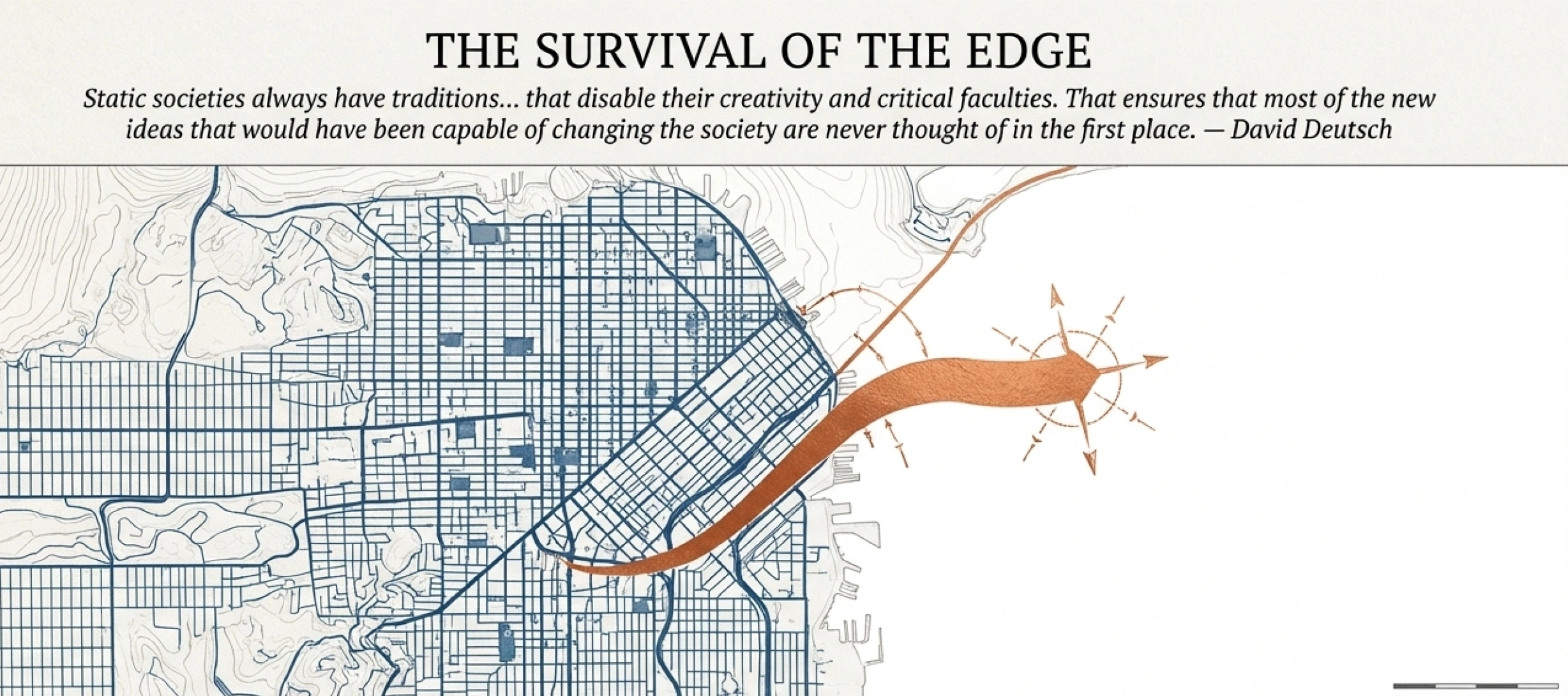

Deutsch once described what happens in societies that let this process wither:

> Static societies always have traditions of bringing up children in ways that disable their creativity and critical faculties. That ensures that most of the new ideas that would have been capable of changing the society are never thought of in the first place.
>
> David Deutsch, The Beginning of Infinity

The risk he describes is not that new ideas get banned. It's that the conditions for their creation quietly erode. A society that replaces deep engagement with summaries, that consumes AI-generated averages of existing thought instead of working through genuine explanations, hasn't outlawed knowledge creation. It has just made it less likely to happen.

Books are how a society remembers what it knows and continues to learn what it doesn't. That is why society still needs them.
