This is the first article in a series about the future of the book. I want to examine a question that sounds simple but isn't: what happens to the oldest knowledge technology (books and reading) when it collides with the newest (LLMs and AI)?

To answer that, I need a way of thinking about what books actually are — not as objects, but as a technology for transferring understanding between minds. And here's where things get interestingly self-referential: the best framework I've found for this comes from David Deutsch's The Beginning of Infinity. A book. A chain of words I read, which changed how I think, and which I'm now using to examine the future of books themselves. If that isn't evidence that the technology works, I don't know what is.

Deutsch picks up Dawkins' concept of meme replication — not internet memes, but ideas that travel from mind to mind. His key insight is that an explanation can't simply be copied. It has to be regenerated by the receiver. When you read a book, you don't download a file. You reconstruct something in your own mind, shaped by your own existing knowledge and experience. The author encodes their thinking into structure and language. You decode it. But what you end up with isn't a copy — it's a new version, rebuilt from the ground up in your own mental landscape.

"The learners, through criticism of their initial guesses and with the help of their books, teachers and colleagues, seeking a viable explanation, will arrive at the same theory as the originator." -- David Deutsch, The Beginning of Infinity

A book, then, is a technology for entering another mind — a structured journey through someone's thinking, designed to rebuild an understanding in yours. It's not information storage. It's not a database you query. It's a deliberate sequence of ideas laid out to guide you through someone else's observations, reasoning, and conclusions, so that you can reconstruct them yourself.

This matters because it tells us what we're really asking when we ask about the future of books. We're not asking whether bound paper survives. We're asking whether this particular technology for mind-to-mind knowledge transfer — structured, sequential text — will remain the best tool for the job. Will we still read books to learn new knowledge? Or is something better coming?

If a book is a technology for regenerating understanding, then the future of that technology can be examined through each stage of how an explanation actually travels from one mind to another. Think of it as a chain:

Format → Creation → Authority → Curation → Consumption → Social

Each link raises its own questions. Format asks: what shape should the explanation take? Creation asks: what happens when producing polished text becomes effortless? Authority asks: how do we trust a book when anyone — or any machine — can write one? Curation asks: how does the right book find the right reader? Consumption asks: does deep reading survive when shortcuts are everywhere? And Social asks: what happens to books as shared reference points, conversation starters, cultural currency?

This series will work through each stage. But we start with Format — because it's the most fundamental question, and also the one where the answer might surprise you.

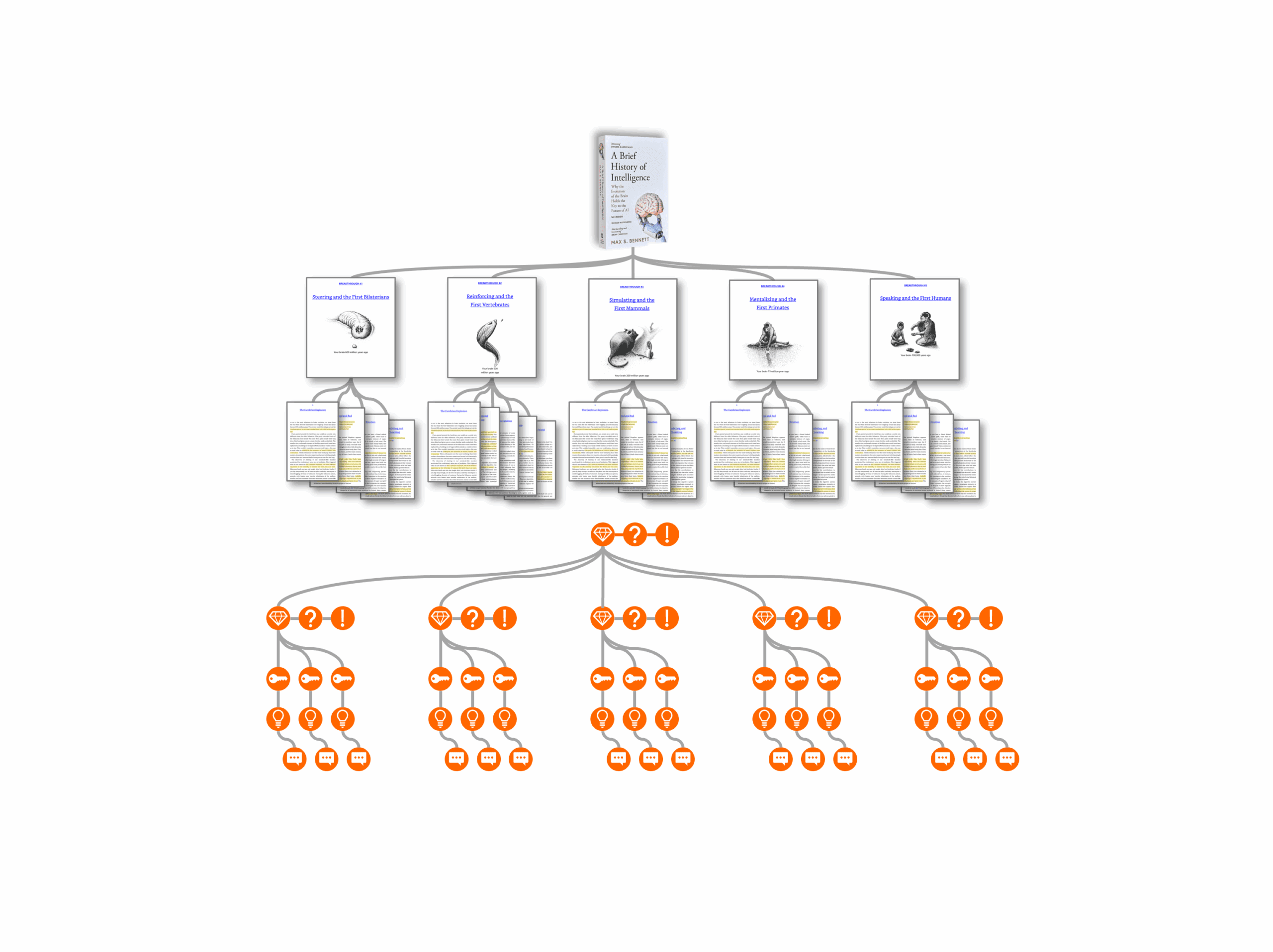

Personally, I've spent most of my time exploring the Consumption side of this chain — examining how readers can more deeply absorb a book's insights and perspectives, and building tools that support that process.

That work, and what we're currently exploring with the new DeepRead Pro co-development community, is my best attempt at a concrete answer to how the consumption step can become an active act of personal development rather than passive absorption.

But before we can talk about how reading might evolve, we need to understand whether the thing being read — text itself — has a future.

Here is something nobody predicted. After decades of moving toward visual interfaces — dashboards, apps, swipeable cards, infinite-scrolling feeds — the most advanced technology humans have ever created communicates primarily through the oldest medium we have. Text. Written words. Reading and writing.

When you interact with an AI, you type words. It responds with words. You read them top to bottom, left to right, exactly as you would read a book. The dominant interface for our most sophisticated technology is, essentially, a conversation conducted in written language.

This is, to put it mildly, ironic. For years, the direction of travel in software was away from text — toward visual, spatial, gestural interfaces. Touch screens. Voice assistants. Drag-and-drop. The assumption was that text was a limitation we'd eventually transcend. And then AI arrived, and text came roaring back.

But is this revival permanent? Or is it a brief, weird moment — a temporary return to the oldest interface before the next leap takes us somewhere entirely different? Will students twenty years from now still carry books — physical or digital — to learn from?

To take the hardest version of this question seriously, consider what Andrej Karpathy — one of the most respected voices in AI — recently argued about the future of software itself.

"It shouldn't maintain some human-readable frontend and my LLM agent shouldn't have to reverse engineer it." -- Andrej Karpathy

Karpathy's argument is that the entire concept of human-facing software is becoming obsolete. Apps, dashboards, websites — these are all interfaces designed for humans to read and interact with. But if AI agents can handle tasks directly through APIs and command-line interfaces, why maintain the human-readable layer at all? It's unnecessary overhead.

He puts it bluntly:

"They give you a list of instructions on a webpage to open this or that URL and click here or there to do a thing. In 2026. What am I a computer? You do it." -- Andrej Karpathy

If Karpathy is right, the human-readable frontend — which includes text — becomes unnecessary for an entire class of interactions. The software industry reconfigures into services with "agent-native ergonomics," and humans stop needing to read instructions, navigate menus, or parse interfaces at all. Where interfaces are still needed, Karpathy envisions them generated on demand by AI agents — fully context-driven, completely personalized, and disposable. No more pre-configured apps. Just temporary surfaces that appear when you need them and disappear when you don't.

This is a serious argument. And it raises an uncomfortable question for anyone who cares about books: if apps dissolve into agent-native services, does the book dissolve too?

I think the answer is no. But it isn't a no that comes easily, and the reason why reveals something important about what text actually does.

Karpathy is talking about text-as-interface — text that triggers actions, conveys instructions, mediates between you and a service. That kind of text probably is on its way out. You don't need to read a webpage to book a flight if your AI agent can do it directly. You don't need to parse a settings menu if the agent understands what you want.

But a book isn't text-as-interface. A book is text-as-thought-vehicle. It doesn't trigger actions. It transmits understanding. Its purpose isn't to help you do something — it's to help you think something you couldn't think before.

These are fundamentally different use cases. Karpathy's argument that pre-configured apps will disappear and be replaced by custom interfaces generated on the fly is convincing — but it simply doesn't apply to books. A book's job isn't to mediate a task. It's to rebuild reasoning. The question for text-as-interface is: "Can an agent do this faster?" The question for text-as-thought-vehicle is entirely different: "Can anything else rebuild someone's reasoning in my mind?"

That second question — will we still need to read in order to deeply understand? — is the one that matters for the future of the book. And answering it requires understanding why text has such a deep grip on how we communicate knowledge.

Thinking is sequential. Text is the only medium that naturally mirrors that sequential structure.

An argument has premises that lead to conclusions. A chain of reasoning unfolds step by step. You can't grasp step three without step two. When an author takes you through their thinking, they're controlling the order of encounter — making sure you meet each idea only after you have the foundation to understand it. Text does this naturally, because text is a sequence. One word after another, one sentence after another, one chapter building on the last.

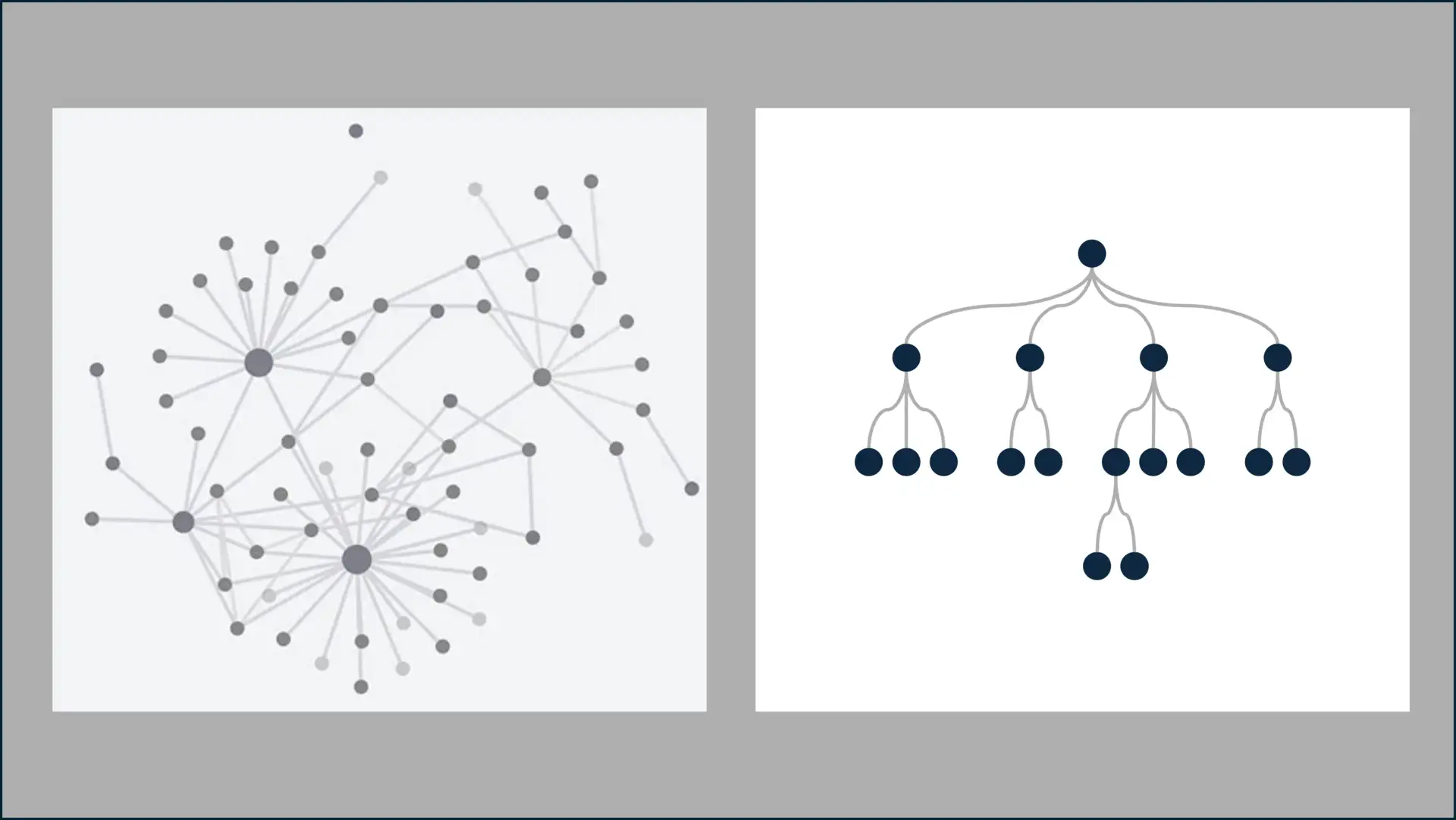

Compare this to a graph, a diagram, or a dashboard. These formats excel at showing relationships and states — how things connect, what the current situation looks like. But they don't naturally encode the journey of arriving at an understanding. A graph can show you that A relates to B relates to C. But it can't easily guide you through why A leads to B, and why that matters before you can grasp C.

This connects to something I've explored in depth elsewhere. Knowledge, I believe, is stored in our minds as a multidimensional network — nodes of concepts connected by associations, much like the graph-based retrieval systems now used in AI.

But knowledge is communicated — and reconstructed — sequentially. To rebuild a network of understanding in someone else's mind, you have to walk them through it one connection at a time, in an order that makes each new connection graspable. The hierarchy is how knowledge travels. This is precisely why books are organized hierarchically — chapters, sections, arguments building on arguments — and why that structure isn't arbitrary but essential to how understanding gets transferred.
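To make the network-versus-sequence point concrete, here is a minimal sketch, assuming we model understanding as a small prerequisite graph. The concept names and the `prerequisites` mapping are invented for illustration, and the ordering uses a standard topological sort from Python's `graphlib` module. It is a toy model of the idea, not a claim about how minds or authors actually work.

```python
from graphlib import TopologicalSorter

# Hypothetical concept network: each concept maps to the concepts a reader
# must already hold before this one becomes graspable.
prerequisites = {
    "memes": set(),
    "explanations are regenerated": {"memes"},
    "book as thought-vehicle": {"explanations are regenerated"},
    "order of encounter": {"explanations are regenerated"},
    "why text persists": {"book as thought-vehicle", "order of encounter"},
}

# Flatten the network into one valid "order of encounter":
# every concept appears only after all of its prerequisites.
reading_order = list(TopologicalSorter(prerequisites).static_order())

for step, concept in enumerate(reading_order, start=1):
    print(step, concept)
```

Several valid orders can exist for the same network, which loosely mirrors the fact that two authors can lay out the same ideas in different sequences. What no valid order can do is present a conclusion before its foundations.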

Even our most advanced AI architectures confirm that sequence is fundamental. When a vision transformer processes an image, it begins with a linearization step: the image is split into patches, and the patches are fed in as a sequence of tokens. The transformer architecture, which powers everything from ChatGPT to image generation, is fundamentally a sequence machine. Even when the input is spatial or multidimensional, it has to be turned into an ordered sequence before the model can work on it.
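The patch-splitting step described above can be sketched in a few lines. This is a simplified illustration in plain Python, assuming a tiny grayscale "image" stored as a list of rows. The function name `image_to_patch_sequence` is hypothetical, and real vision transformers additionally project each patch through a learned layer and attach position information, which this sketch leaves out.

```python
def image_to_patch_sequence(image, patch_size):
    """Split a 2D grid (a list of rows) into non-overlapping square patches.

    Patches are returned in row-major order (left to right, top to bottom),
    and each patch is flattened into a 1D list of pixel values.
    """
    height = len(image)
    width = len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")

    sequence = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            sequence.append(patch)
    return sequence


# A toy 4x4 "image" whose pixel values are simply 0..15.
toy_image = [[row * 4 + col for col in range(4)] for row in range(4)]

# The two-dimensional grid becomes an ordered list of four patches.
patch_sequence = image_to_patch_sequence(toy_image, patch_size=2)
for position, patch in enumerate(patch_sequence):
    print(position, patch)
```

The grid goes in as a spatial object and comes out as a list with a first item, a second item, and so on, which is the form the model actually consumes.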

The most sophisticated technology we've built to process knowledge runs on the same principle as the most ancient: one thing after another, in order.

But why natural language specifically? Why not mathematical notation, or code, or formal logic? These are precise. They're unambiguous. Surely they're better for transferring knowledge?

Douglas Hofstadter, in I Am a Strange Loop and Gödel, Escher, Bach, points toward an answer. Formal systems are powerful precisely because they strip away ambiguity. But that stripping is also their limitation. They can't easily express the messy, context-dependent, self-referential quality of human meaning-making. Natural language is uniquely tolerant of ambiguity, metaphor, and self-reference — and this isn't a bug. It's what makes it capable of expressing things that formal systems can't.

Try explaining to someone why a particular historical event was ironic. Or why a scientific finding should make us rethink an assumption we didn't know we had. Try doing that in a diagram. In an equation. In code. You can't, really — at least not without surrounding it with prose that does the actual explaining.

We can even generalize reading as an act of playing with symbols and bootstrapping new understanding by doing so — whether those symbols are words, mathematical notation, or lines of code. But natural language remains the connective tissue, the thing that explains why the equation matters and what the code is actually doing.

There's a telling development here: the act of programming by humans has moved dramatically closer to natural language — from machine code to assembly to high-level languages to, now, prompting in plain English. You might argue this is simply a concession to human limitations. But Hofstadter would suggest it's deeper than that. Natural language's tolerance for ambiguity and context might be necessary for expressing the kinds of knowledge that matter most for human understanding.

Honesty demands we ask: are there situations where text is not the best format for transferring understanding? Yes. Clearly.

Consider a concept like information density per unit of attention — how much understanding can you transfer for a given investment of the reader's focus? Books are high-density but linear. They demand sustained attention. A well-designed diagram can communicate a system's structure in seconds. A flow chart can make a process visible in ways that paragraphs of description cannot. A graph can reveal patterns that would be invisible in tabular data.

Bret Victor, the interaction designer who coined the term "explorable explanations," makes the strongest case against pure text:

"A typical reading tool, such as a book or website, displays the author's argument, and nothing else. The reader's line of thought remains internal and invisible, vague and speculative." -- Bret Victor, Explorable Explanations

Victor's answer is interactive documents — text enriched with manipulable models, where the reader can adjust the author's assumptions and see the consequences immediately. For certain kinds of understanding — dynamical systems, feedback loops, emergent behavior — this kind of interactive exploration teaches faster than static text. You develop intuition by playing rather than just reading.

"People currently think of text as information to be consumed. I want text to be used as an environment to think in." -- Bret Victor, Explorable Explanations

This is compelling. And it points toward a genuine evolution of the book format. But notice what it doesn't do: it doesn't replace text. It enriches it. Victor's explorable explanations still have prose — authored, sequential, guiding the reader through an argument. The interactive elements are embedded within the text, not substituted for it.

UX designer Vitaly Friedman has documented a similar pattern in AI interfaces: even the most sophisticated products are evolving beyond pure chat toward hybrid interfaces — structured inputs, comparison views, direct manipulation of outputs. But the underlying medium remains text-based. It recedes from the foreground, becoming what Friedman calls "quiet AI" — invisible infrastructure rather than the interface itself. Text doesn't disappear. It just stops being the thing you notice.

The pattern that emerges is not "text versus alternatives" but "text as backbone, with alternatives layered on top." Graphs, diagrams, interactive simulations — these are powerful supplements for specific purposes. But they need the sequential scaffolding of text to explain why. The relationship might work like this: text guides you into unfamiliar territory; interaction lets you build intuition once you're there. You read first to understand the landscape, then play to feel how it works.

Text externalizes thinking in a way that makes it criticizable. When someone writes an argument down, you can stop. Reread the sentence. Disagree with it. Compare it to the paragraph before. Check whether the conclusion actually follows from the premises. You can stare at an argument — hold it still and examine it from every angle.

Video and audio don't offer this in the same way. They wash over you. You can pause and rewind, yes, but you can't pin a spoken argument down and push against it with the same precision. Text freezes thinking in place, making it available for the critical scrutiny that Deutsch argues is the engine of all genuine knowledge creation.

Text is therefore a medium that selects for more robust ideas, because it makes them easier to examine and challenge.

Back to our question: in a world where AI can generate, summarize, and even explain ideas for us — will people still pick up a book to learn something new?

The book as a format — structured, sequential text designed to rebuild understanding in the reader's mind — survives. Not because of inertia or nostalgia, but because it maps to something fundamental about how thinking works and how knowledge travels between minds.

Text mirrors the temporal structure of thought. It encodes the order of encounter that makes understanding possible. It tolerates the ambiguity and self-reference that formal systems can't handle. It externalizes thinking in a way that invites the critical scrutiny that separates good ideas from bad ones. And even our most sophisticated AI architectures — the closest thing we have to artificial thinking — are built on the same sequential foundation.

This doesn't mean the book stays frozen in its current form. Supplements will evolve — interactive elements, visual aids, explorable models layered on top of the textual backbone. The containers will change: screens instead of pages, dynamic instead of static, perhaps eventually spatial or immersive. But the core technology — an author's thinking encoded in sequential natural language, designed to be reconstructed by a reader — persists.

The real disruptions, I believe, are not in the format but in the other stages of the chain. What happens when anyone can produce a book effortlessly? How do we trust a text when it might have been generated without understanding? How does the right book find the right reader when the flood of content overwhelms every filter? How does the reader engage deeply when shortcuts are everywhere?

These are the questions for the rest of this series. The format holds. Everything else is up for grabs.

Next in this series: The Future of the Book: Creation — What Happens When the Execution Barrier Drops to Zero?

Ready to shape the future of DeepRead? Join the co-development community.

What you get:

Your commitment: