Now we move one link down the chain. If the format endures, what happens to the production of that format when the barrier to creating it drops to near zero?

In the first article of the series, I argued that text survives as a knowledge technology, because sequential language maps to how thinking works and because no alternative has improved on that. In the second article, I looked at what happens when the cost of producing that text drops to near zero: more books, more niche knowledge entering the written record, but no improvement at the very top, because the works that matter most require something AI cannot provide.

That second article ended with a question: when the flood of books arrives, how do you know which ones to trust? That's what this piece is about.

But to answer it properly, I need to start somewhere unexpected — not with the books, but with the decision to read one.

Choosing your next book is one of the heavier media decisions you make. Not because books cost a lot — though they do — but because of what you're committing when you open one. A serious book takes five, eight, twelve hours to read. That's not a passive evening. That's a week of commutes, or several evenings you could have spent differently. And behind every book you pick up is a silent list of books you're not reading instead.

Reading a book is not a consumption decision. It's an investment decision. And like all investment decisions, it is primarily a question of trust.

You're trusting that the author actually knows something you don't. That the time spent will move you somewhere. That what's inside the cover matches what the cover implies. This is why book recommendations have always carried so much weight — and why the question of who to trust has never really been about books at all. It's been about people.

Across every era of publishing — before and after the internet, before and after Amazon, before and after social media — one thing has remained constant. The most reliable way to find your next book is to have someone you trust tell you to read it.

Not a critic. Not an algorithm. Not a bestseller list. A person whose taste you know, who has read the book, and who thinks you specifically should read it. Surveys consistently find that between 47% and 64% of readers rely primarily on personal recommendations — friends, family, colleagues — to decide what to read next. Every other mechanism, however sophisticated, sits below this in actual influence.

This tells you something important: the problem of book discovery has never been about finding good books. It has been about finding a trusted person who has already found them for you.

The entire history of book discovery is a history of different technologies trying to answer that single question at scale: how do you simulate the trusted friend?

For most of the twentieth century, the simulation worked through institutions. Literary critics in respected publications, prize committees, television personalities — these entities earned authority through their track record, their taste, their perceived alignment with a certain reader's sensibility. The most remarkable example is Oprah's Book Club, launched in 1996. Marketing researchers estimated that Oprah's endorsement power was anywhere from 20 to 100 times that of any other media personality — not because she was a credentialed expert, but because millions of readers felt they knew her. Her recommendation felt personal. She functioned, for those readers, like a trusted friend at the scale of a television broadcast.

The internet broke the institutional model. Amazon's "people who bought this also bought" was the first serious algorithmic attempt at the trusted friend: rather than editorial judgment, it used behavioral data. It asked not "what do experts think is good?" but "what did people like you actually buy?" Goodreads, which Amazon acquired in 2013, went further — it built a social layer, letting you see what actual friends and readers with similar taste were reading, rating, and recommending. By 2012, Goodreads was converting browsers into buyers at nearly three times Amazon's own rate. The reason was simple: social proof from people who looked like you converted better than any editorial endorsement.

Then came BookTok. The mechanism shifted again — from behavioral data to emotional proof. When you watch someone cry on camera about a book, you don't need to know anything about their credentials. You trust their reaction. It's the most direct possible simulation of a friend telling you something moved them. The numbers reflect the power of this: BookTok influenced an estimated 59 million US print sales in 2024 alone, and drove print book sales to their highest levels since records began in the early 2000s. In Canada, backlist books trending on BookTok saw sales increases of over 1,000% within three years.

Each of these mechanisms — Oprah, Amazon, Goodreads, BookTok — is a different answer to the same problem. Not "how do we assess book quality?" but "how do we make a stranger feel like a trusted friend?"

"AI is sophisticated high-tech plagiarism." Noam Chomsky

Chomsky's provocation sharpens the stakes. If an AI can produce text that reads exactly like a trusted expert — fluid, confident, well-structured, even citing the right sources — then the textual surface of authority has been completely decoupled from any actual expertise behind it. You can no longer use the quality of the prose as a proxy for the quality of the thinking. The flood of plausible-sounding but potentially hollow books is only growing. Which makes the trusted friend not less important, but more important than ever. The harder it becomes to judge a book by its surface, the more you need someone you trust who has already done that judgment for you.

The most interesting recent developments in book discovery are not trying to solve the authority problem directly. They are moving in a different direction — deeper into the reader rather than deeper into the author.

StoryGraph, the fastest-growing alternative to Goodreads, recommends books based on your mood, your reading pace, your personal history, and your stated preferences. It does not ask "what did critics say about this book?" It asks "what does your reading history say about you, and what book matches that right now?" Fable goes further into social territory — book clubs where you can see other members' highlights and reactions in real time, turning reading into a shared experience rather than a solitary one.

The most structurally interesting move is Spotify's entry into audiobooks. Spotify solved a problem every book platform struggles with: how do you recommend a book to someone who has never listened to an audiobook before? Their answer was to import signals from music and podcast listening behavior, using what you listen to as a proxy for what you might want to read. The underlying insight is striking: your taste is not compartmentalized by medium. The reader who gravitates toward a certain kind of podcast probably gravitates toward a certain kind of book. On this view, your music preferences help predict your literary preferences.

The direction of travel across all these innovations is consistent: the future of book discovery is not about rating books better. It's about knowing readers better. A system that deeply understands your interests, your open questions, the books you've already read, what you found valuable and what left you cold — such a system can make suggestions that feel like they came from a very well-read friend who happens to know you well. The final decision will still be influenced by what the people around you are reading and talking about. But the shortlist gets built from a model of you.

This is the direction we are building toward in DeepRead Pro.

None of this, however, touches the author side of the equation.

Every innovation described above focuses on matching readers to books based on taste and community signals. What remains entirely unsolved is the original authority question: how do you evaluate the person who wrote the book?

Before AI, this was a manageable problem. The effort required to write a book was itself a filter. A published book implied someone had spent months or years with an idea, had gone through editorial review, had committed publicly to a set of arguments. These were imperfect signals, but they were real signals — evidence of investment, of skin in the game. A book with a track record of citations, or an author with a history of published work, gave you something to evaluate.

AI dissolves these signals. Polished, well-structured, apparently authoritative text can now be produced in hours. The credential of "published book" — already weakened by self-publishing — means almost nothing as a quality signal on its own. Anyone can have a book.

What can't be faked is time. And this is where the most interesting model is emerging — not from any platform, but from the authors themselves.

Consider two examples that illustrate a pattern worth paying attention to.

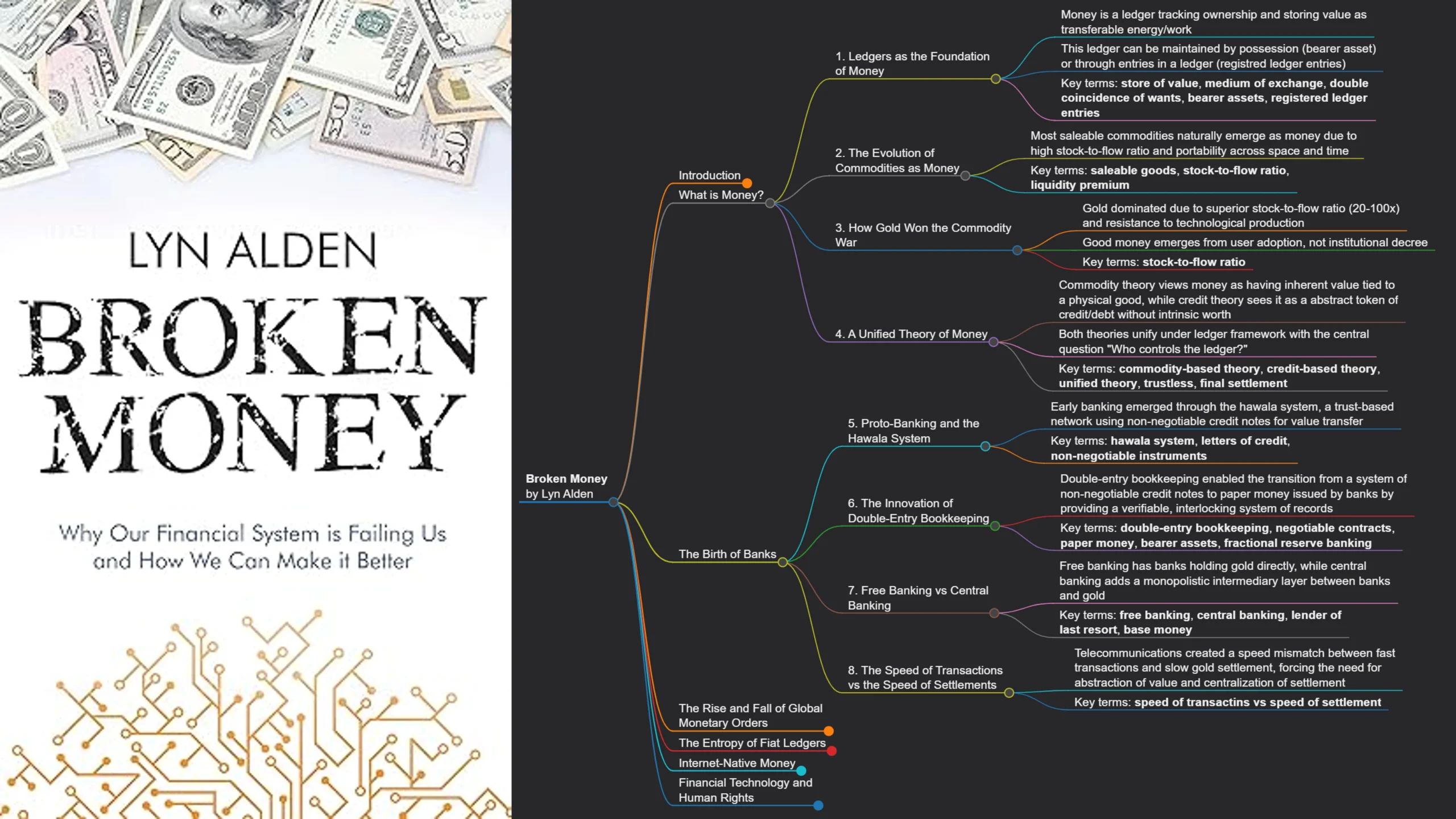

Lyn Alden spent years writing detailed investment analysis, publishing research on monetary systems, speaking on podcasts and at conferences, building a following of people who had come to rely on her thinking about macroeconomics and Bitcoin. When she eventually wrote a book, she was not asking readers to trust an unknown author. She was asking people who already knew her work to read a more comprehensive version of what they had been reading for years. The result was Broken Money — a book that landed with an audience already primed to trust it.

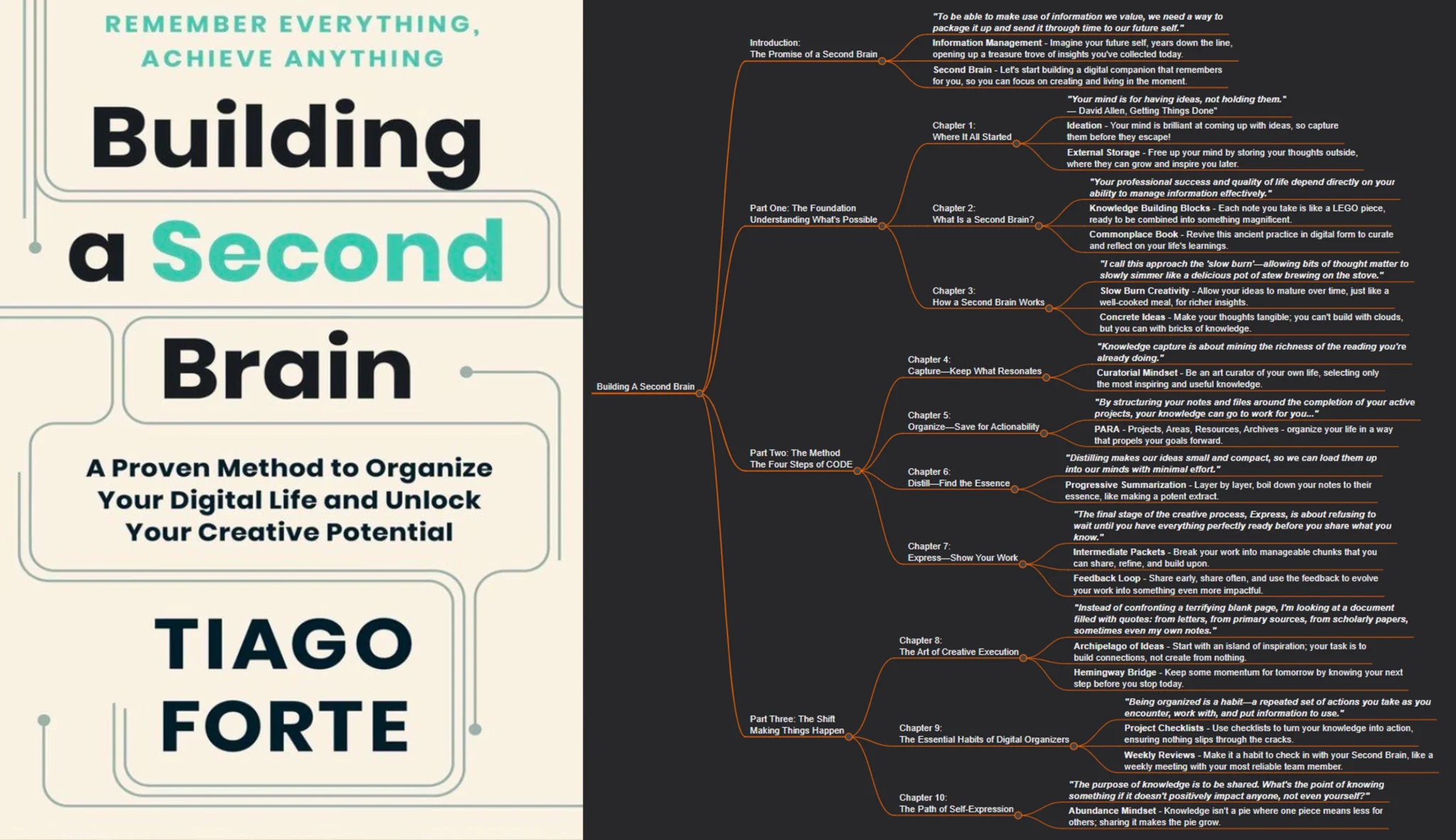

Tiago Forte did something similar. Before Building a Second Brain appeared as a book, it existed as a course, a blog, a community, years of teaching people the same ideas in different formats. The book was not his introduction to readers. It was a crystallization of a relationship that already existed.

In both cases, the book was the culmination of a trust-building process, not its starting point. The reader wasn't being asked to trust a stranger with eight to ten hours of their attention. They were being asked to go deeper with someone they already knew.

In a world where anyone can produce a book, the authors who will be trusted are the ones whose thinking readers have been able to follow over time — not those who appear fully formed on a bookstore shelf.

This is, in a sense, a return to something old. Before mass publishing, an author's reputation was built slowly, through correspondence, through conversation, through word spreading gradually among communities of readers. What's new is the infrastructure: newsletters, podcasts, social media, and communities all let authors do what Lyn Alden and Tiago Forte did, at much larger scale and a much faster pace. An expert who writes consistently, engages with disagreement publicly, and updates their views when evidence changes is demonstrating something over time that no polished text can fake.

The practical implication is straightforward, even if the execution takes effort. In a world flooded with AI-assisted books, the question to ask about any book is no longer "does this seem well-written and well-sourced?" — because that bar is now easy to clear artificially. The question is: "do I have evidence that a real mind, committed to these ideas over time, produced this?"

That evidence looks like: a track record of writing or speaking on the subject. A history of being right and wrong in public, and updating accordingly. A community of readers who have engaged with the ideas before the book existed. An author who has something at stake in the argument — who has built their reputation on a position, who has skin in the game.

Word of mouth still closes the deal. A recommendation from someone whose taste you know, who has actually read the book, who can tell you why it's worth your specific hours — that signal is as valuable as it ever was. No algorithm fully replaces it, because no algorithm knows both the book and you as well as a well-read friend does.

What AI will increasingly help with is the shortlisting: learning your reading history, your questions, your intellectual obsessions, and surfacing candidates you might not have found on your own. Think of it as expanding the pool before the trusted friend makes the final call.

The infrastructure of trust is changing. But the underlying need — to find a real mind worth entering, and a reliable guide to help you find it — hasn't changed at all.

The book format survived the first challenge in this series. Creation survived the second — more books, but the ceiling of quality still requires something human. Authority and curation now face a more subtle disruption. The signals we used to rely on are degrading; new ones are emerging that favor demonstrable expertise over time rather than the credential of publication.

But here's what this analysis makes clear: the problem of trust is actually the problem of discovery, and the problem of discovery has always been the problem of the trusted friend. Technology changes the mechanism. The human need it serves stays fixed.

Which brings us to the next question in the chain. Once you have found the right book — once the trust question is resolved and you actually open it — what happens next? The crisis of consumption is not about finding books. It's about whether you're reading them in a way that actually changes how you think.

Ready to shape the future of DeepRead? Join the co-development community.
